
Lab1

An introductory session on Python and a few libraries used frequently in this course (NumPy, Matplotlib, OpenCV, Keras).

Lab2

Implementation from scratch of a softmax classifier, trained on CIFAR-10.

Accuracy: 41.65% on the validation set
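For reference, the core of a softmax classifier (linear scores, softmax, cross-entropy loss, and a gradient descent update) can be sketched in NumPy as below. This is a minimal illustration, not the lab's actual code: the function names, array shapes, learning rate, and the random toy data standing in for CIFAR-10 are all assumptions.

```python
import numpy as np


def softmax(scores):
    """Row-wise softmax; subtracting the row max keeps exp() from overflowing."""
    shifted = scores - scores.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)


def loss_and_grad(W, X, y):
    """Mean cross-entropy loss and its gradient with respect to W.

    W: (D, C) weights, X: (N, D) inputs, y: (N,) integer class labels.
    """
    n = X.shape[0]
    probs = softmax(X @ W)
    loss = -np.log(probs[np.arange(n), y]).mean()
    dscores = probs.copy()
    dscores[np.arange(n), y] -= 1.0  # d(loss)/d(scores) = probs - one_hot(y)
    grad = X.T @ dscores / n
    return loss, grad


# Toy data standing in for flattened images (hypothetical sizes).
rng = np.random.default_rng(0)
X = rng.normal(size=(64, 20))
y = rng.integers(0, 10, size=64)
W = np.zeros((20, 10))

initial_loss, _ = loss_and_grad(W, X, y)
for _ in range(200):
    loss, grad = loss_and_grad(W, X, y)
    W -= 0.1 * grad  # plain gradient descent step
final_loss, _ = loss_and_grad(W, X, y)
```

With zero-initialized weights every class gets the same probability, so the initial loss equals ln(10) for ten classes, which is a handy sanity check before training.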

Lab3

Convolution implementation and practice with TensorFlow on CIFAR-10.

- serialization

- use ReLU activations and the He initializer

- use regularization

- use dropout

- use cutout (custom layer implementation)
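The lab implements cutout as a custom layer; the operation itself can be sketched without any framework, as below. This is only an illustration under assumptions: the function name `cutout`, the patch size, the zero fill value, and the choice to let the patch be clipped at the image borders are not taken from the lab code.

```python
import numpy as np


def cutout(image, size, rng):
    """Return a copy of `image` (H, W, C) with one square patch zeroed out.

    The patch centre is sampled uniformly over the image, so the patch may be
    clipped at the borders.
    """
    h, w = image.shape[:2]
    cy = rng.integers(0, h)
    cx = rng.integers(0, w)
    y0, y1 = max(cy - size // 2, 0), min(cy + size // 2, h)
    x0, x1 = max(cx - size // 2, 0), min(cx + size // 2, w)
    out = image.copy()
    out[y0:y1, x0:x1, :] = 0
    return out


rng = np.random.default_rng(0)
image = np.ones((32, 32, 3), dtype=np.float32)  # CIFAR-10-sized dummy image
augmented = cutout(image, size=8, rng=rng)
```

As with dropout, an augmentation like this would normally be applied only during training and skipped at inference time.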


Lab4

"The main objective of this laboratory is to familiarize you with the training process of a neural network. More specifically, you'll follow the "recipe" for training neural networks proposed by Andrej Karpathy. You'll go through all the steps of training: data preparation, debugging, and hyper-parameter tuning. In the second part of the laboratory, you'll experiment with transfer learning and fine-tuning. Transfer learning is a concept from machine learning which allows you to reuse the knowledge gained while solving one problem (in our case, the CNN weights) and apply it to a similar problem. This is useful when you are facing a classification problem with a small training dataset."

- custom data generator

- dataset used: GTSRB - German Traffic Sign Recognition Benchmark

- experiment with ResNet blocks

- transfer learning and fine-tuning with MobileNet as base model

- data augmentation

- custom implementation of cosine annealing scheduler

- top 3 ensemble: 98.33% accuracy on validation set
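A cosine annealing schedule decays the learning rate from a maximum to a minimum along half a cosine period. The sketch below shows the usual formula; the function name and the default `lr_max`, `lr_min`, and epoch count are assumptions for illustration, not the values used in the lab.

```python
import math


def cosine_annealing(epoch, total_epochs, lr_max=1e-3, lr_min=1e-5):
    """Learning rate for `epoch`, decaying from lr_max to lr_min along a half cosine."""
    progress = min(epoch, total_epochs) / total_epochs
    return lr_min + 0.5 * (lr_max - lr_min) * (1.0 + math.cos(math.pi * progress))


# Learning rates for epochs 0..10 of a 10-epoch run.
schedule = [cosine_annealing(e, total_epochs=10) for e in range(11)]
```

In Keras, a function like this could be plugged into training through `tf.keras.callbacks.LearningRateScheduler`, for example wrapped as `lambda epoch, lr: cosine_annealing(epoch, total_epochs)`.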


Lab5

"In this laboratory we'll work with a semantic segmentation model. The task of semantic segmentation implies the labeling/classification of all the pixels in the input image. You'll build and train a fully convolutional neural network inspired by U-Net. Also, you will learn how to use various callbacks during the training of your model.

Finally, you'll implement several metrics suitable for evaluating segmentation models."

- image segmentation on OxfordPets dataset

- U-Net downsample and upsample path

- skip connection

- checkpoints, terminate on NaN, early stopping

- mean pixel accuracy: 92.32%

- intersection over union: 70.28%

- frequency weighted intersection over union: 86.83%
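The three metrics above can all be derived from a per-class confusion matrix. The sketch below uses the common textbook definitions, which may differ in detail from the lab's implementation; the function names and the tiny example masks are assumptions.

```python
import numpy as np


def confusion_matrix(y_true, y_pred, num_classes):
    """Entry [i, j] counts pixels of true class i predicted as class j."""
    idx = y_true.ravel() * num_classes + y_pred.ravel()
    return np.bincount(idx, minlength=num_classes ** 2).reshape(num_classes, num_classes)


def segmentation_metrics(y_true, y_pred, num_classes):
    cm = confusion_matrix(y_true, y_pred, num_classes).astype(np.float64)
    tp = np.diag(cm)                  # correctly labelled pixels per class
    true_per_class = cm.sum(axis=1)   # ground-truth pixels per class
    pred_per_class = cm.sum(axis=0)   # predicted pixels per class
    union = true_per_class + pred_per_class - tp
    # Assumes every class occurs at least once in y_true;
    # otherwise the divisions below need a guard against zero.
    iou = tp / union
    return {
        "mean_pixel_accuracy": float(np.mean(tp / true_per_class)),
        "mean_iou": float(np.mean(iou)),
        "frequency_weighted_iou": float(np.sum(true_per_class / cm.sum() * iou)),
    }


# Tiny 2x3 label masks with three classes, for illustration only.
y_true = np.array([[0, 0, 1], [1, 2, 2]])
y_pred = np.array([[0, 1, 1], [1, 2, 0]])
metrics = segmentation_metrics(y_true, y_pred, num_classes=3)
```

Frequency weighted IoU weights each class's IoU by its share of ground-truth pixels, so large classes dominate the score, whereas mean IoU treats every class equally.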


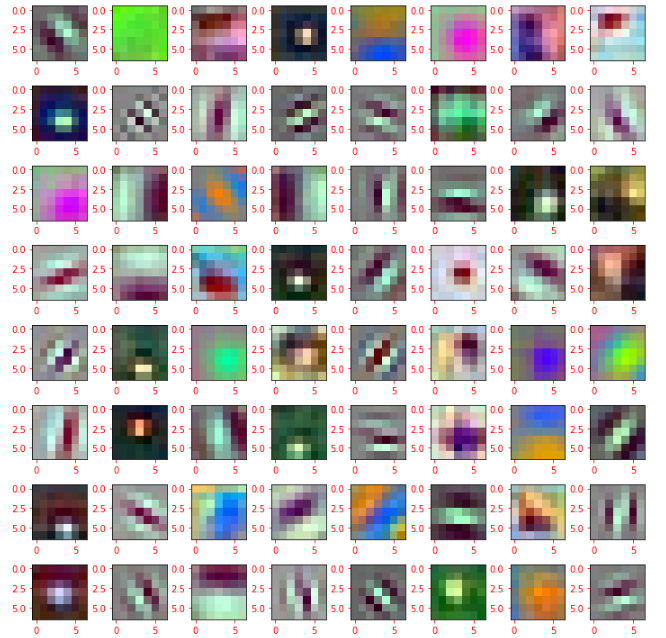

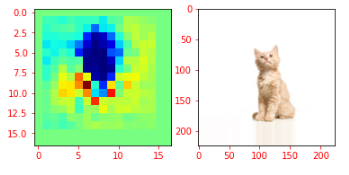

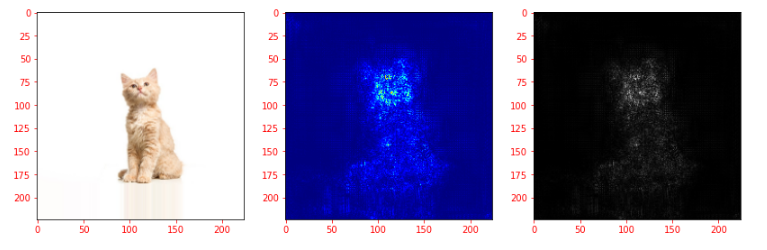

Lab6

"Visualizing what neural networks learn"

Babeș-Bolyai University

Faculty of Mathematics and Computer Science

Computer Vision and Deep Learning course

Third year