diff --git a/.Rbuildignore b/.Rbuildignore

index 1da9fa4..1e87b4d 100644

--- a/.Rbuildignore

+++ b/.Rbuildignore

@@ -39,3 +39,4 @@

^man/figures/relabel-workflow\.png$

^man/figures/sample-browser\.png$

^man/figures/settings-dialog\.png$

+^man/figures/class-review\.png$

diff --git a/DESCRIPTION b/DESCRIPTION

index 6c26539..8cb3961 100644

--- a/DESCRIPTION

+++ b/DESCRIPTION

@@ -1,6 +1,6 @@

Package: ClassiPyR

Title: A Shiny App for Manual Image Classification and Validation of IFCB Data

-Version: 0.1.1

+Version: 0.2.0

Authors@R: c(

person("Anders", "Torstensson", email = "anders.torstensson@smhi.se", role = c("aut", "cre"),

comment = c("Swedish Meteorological and Hydrological Institute", ORCID = "0000-0002-8283-656X")),

@@ -9,9 +9,10 @@ Authors@R: c(

)

Description: A Shiny application for manual classification and validation of

Imaging FlowCytobot (IFCB) plankton images. Supports loading classifications

- from CSV files or MATLAB classifier output, drag-select for batch operations,

- and exports classifications in MATLAB-compatible format for use with the

- 'ifcb-analysis' toolbox.

+ from CSV, HDF5 (.h5), or MATLAB classifier output, directly from remote

+ IFCB Dashboard instances, or via live CNN prediction through a Gradio API.

+ Features drag-select for batch operations and exports classifications in

+ MATLAB-compatible format for use with the 'ifcb-analysis' toolbox.

License: MIT + file LICENSE

Encoding: UTF-8

LazyData: true

@@ -20,7 +21,8 @@ Imports:

shinyjs,

shinyFiles,

bslib,

- iRfcb,

+ curl,

+ iRfcb (>= 0.8.1),

dplyr,

DT,

jsonlite,

@@ -30,6 +32,7 @@ Imports:

Suggests:

testthat (>= 3.0.0),

covr,

+ hdf5r,

knitr,

rmarkdown,

pkgdown

diff --git a/NAMESPACE b/NAMESPACE

index 9577f2a..7c6d311 100644

--- a/NAMESPACE

+++ b/NAMESPACE

@@ -3,12 +3,19 @@

export(copy_images_to_class_folders)

export(create_empty_changes_log)

export(create_new_classifications)

+export(download_dashboard_adc)

+export(download_dashboard_autoclass)

+export(download_dashboard_image_single)

+export(download_dashboard_images)

+export(download_dashboard_images_bulk)

+export(download_dashboard_images_individual)

export(export_all_db_to_mat)

export(export_all_db_to_png)

export(export_db_to_mat)

export(export_db_to_png)

export(filter_to_extracted)

export(get_config_dir)

+export(get_dashboard_cache_dir)

export(get_db_path)

export(get_default_db_dir)

export(get_file_index_path)

@@ -16,24 +23,33 @@ export(get_sample_paths)

export(get_settings_path)

export(import_all_mat_to_db)

export(import_mat_to_db)

+export(import_png_folder_to_db)

export(init_python_env)

export(is_valid_sample_name)

export(list_annotated_samples_db)

+export(list_annotation_metadata_db)

+export(list_classes_db)

+export(list_dashboard_bins)

export(load_annotations_db)

+export(load_class_annotations_db)

export(load_class_list)

export(load_file_index)

export(load_from_classifier_mat)

export(load_from_csv)

export(load_from_db)

+export(load_from_h5)

export(load_from_mat)

+export(parse_dashboard_url)

export(read_roi_dimensions)

export(rescan_file_index)

export(run_app)

export(sanitize_string)

export(save_annotations_db)

+export(save_class_review_changes_db)

export(save_file_index)

export(save_sample_annotations)

export(save_validation_statistics)

+export(scan_png_class_folder)

export(update_annotator)

importFrom(DBI,dbConnect)

importFrom(DBI,dbDisconnect)

@@ -43,9 +59,13 @@ importFrom(DBI,dbWriteTable)

importFrom(DT,renderDT)

importFrom(RSQLite,SQLite)

importFrom(bslib,bs_theme)

+importFrom(curl,curl_fetch_disk)

+importFrom(curl,curl_fetch_memory)

+importFrom(curl,new_handle)

importFrom(dplyr,filter)

importFrom(iRfcb,ifcb_annotate_samples)

importFrom(iRfcb,ifcb_create_manual_file)

+importFrom(iRfcb,ifcb_download_dashboard_data)

importFrom(iRfcb,ifcb_extract_pngs)

importFrom(iRfcb,ifcb_get_mat_variable)

importFrom(jsonlite,fromJSON)

diff --git a/NEWS.md b/NEWS.md

index 2db4d50..3189cca 100644

--- a/NEWS.md

+++ b/NEWS.md

@@ -1,3 +1,27 @@

+# ClassiPyR 0.2.0

+

+## New features

+

+- **Live Prediction**: Added a "Predict" button in Sample Mode that classifies all images in the loaded sample using a remote CNN model via `iRfcb::ifcb_classify_images()`. Configure the Gradio API URL and model in Settings > Live Prediction. The model dropdown is populated dynamically from the Gradio server. Predictions respect the classification threshold setting, skip manually reclassified images, and new class names from the model are added to the class list automatically. A per-image progress bar shows classification progress.

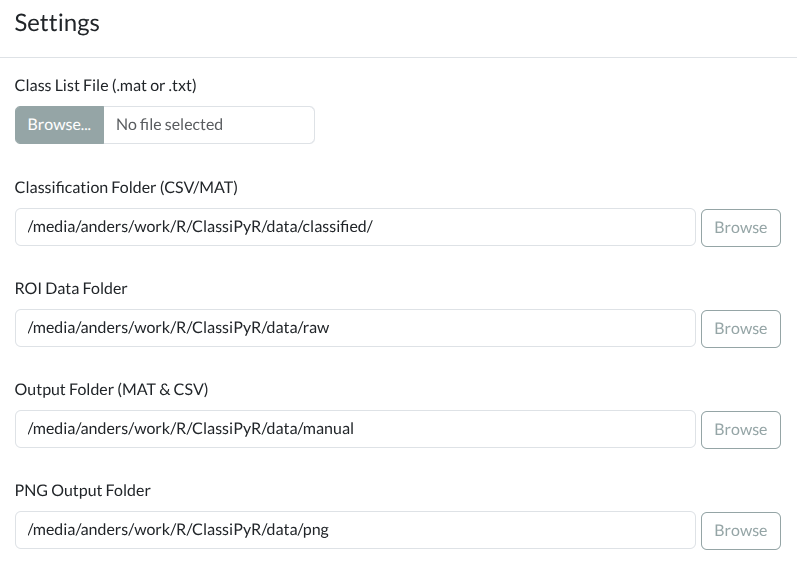

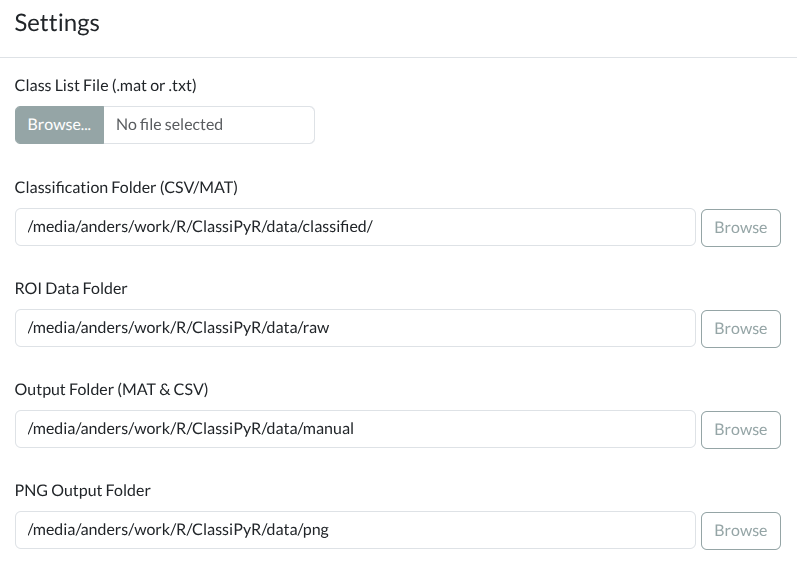

+- **IFCB Dashboard support**: Connect directly to remote IFCB Dashboard instances (e.g. `https://habon-ifcb.whoi.edu/`) without downloading data locally. Toggle between "Local Folders" and "IFCB Dashboard" in Settings, enter a Dashboard URL (with optional `?dataset=` parameter), and browse samples from the API. Images are downloaded on demand and cached locally. Optionally load dashboard auto-classifications for validation mode. Supports MAT export by downloading ADC files on demand, with graceful fallback to SQLite-only when ADC is unavailable.

+- **Dashboard class review optimization**: Class review mode with a dashboard source now downloads individual PNG images instead of entire zip archives, making it much faster when reviewing a single class across many samples.

+- **Configurable dashboard download settings**: Dashboard mode now exposes parallel downloads, sleep time, timeout, and max retries in an "Advanced Download Settings" section in Settings. Previously these were hardcoded.

+- **Local classification files in dashboard mode**: The Classification Folder setting is now available in dashboard mode. When configured, local CSV/H5/MAT classification files take priority over dashboard auto-classifications, with dashboard autoclass as a fallback.

+- New exported functions: `parse_dashboard_url()`, `list_dashboard_bins()`, `download_dashboard_images()`, `download_dashboard_images_bulk()`, `download_dashboard_image_single()`, `download_dashboard_images_individual()`, `download_dashboard_adc()`, `download_dashboard_autoclass()`, and `get_dashboard_cache_dir()` for programmatic dashboard access.

+- **Class Review Mode**: View and reclassify all annotated images of a specific class across the entire database. Switch to class review via the mode toggle in the sidebar, select a class, and load all matching images from all samples at once. Changes are saved as row-level updates to the database.

+- New exported functions: `list_classes_db()`, `load_class_annotations_db()`, and `save_class_review_changes_db()` for programmatic class review operations.

+- Added **Import PNG → SQLite** button in Settings > Import / Export. Imports annotations from a folder of PNG images organized in class-name subfolders (e.g. exported by ClassiPyR or other tools). Folder names follow the iRfcb convention where trailing `_NNN` suffixes are stripped.

+- When importing PNG folders with class names not in the current class list, a **class mapping dialog** lets users remap unmatched classes to existing ones or add them as new classes.

+- Overwrite warning dialog shown when imported samples already exist in the database.

+- New exported functions: `scan_png_class_folder()` for scanning PNG class folder structures, and `import_png_folder_to_db()` for programmatic bulk import.

+- **HDF5 classification support**: Load classifications from `.h5` files produced by [iRfcb](https://github.com/EuropeanIFCBGroup/iRfcb) (>= 0.8.0). Requires the optional `hdf5r` package.

+- **Classification threshold toggle**: New "Apply classification threshold" checkbox in Settings controls whether thresholded or raw predictions are used, for all classification formats (CSV, H5, MAT).

+- **Skip class from PNG export**: New option in Settings to exclude a specific class (e.g. "unclassified") from PNG output.

+

+## UI improvements

+

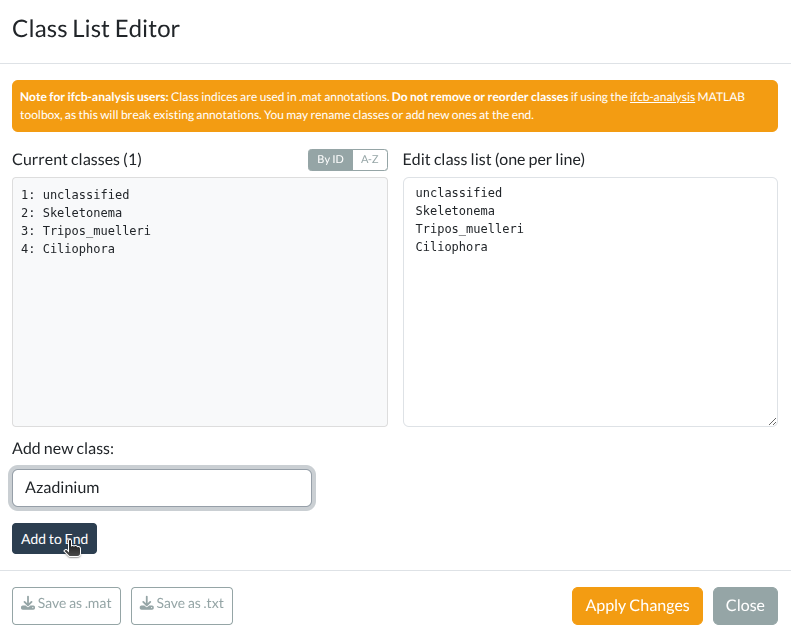

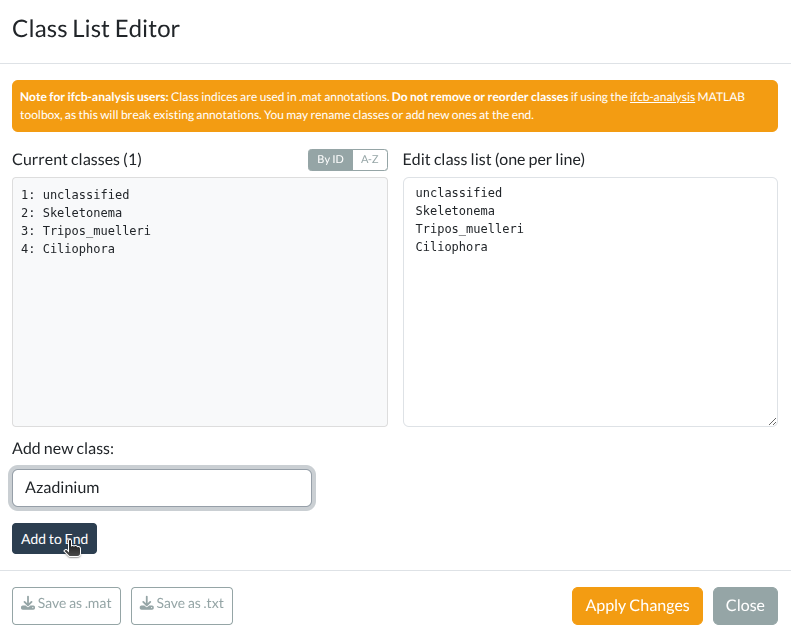

+- The **class list editor** now shows the number of annotated images per class in parentheses, queried from the SQLite database.

+

# ClassiPyR 0.1.1

## New features

diff --git a/R/dashboard.R b/R/dashboard.R

new file mode 100644

index 0000000..7be086a

--- /dev/null

+++ b/R/dashboard.R

@@ -0,0 +1,563 @@

+# Dashboard functions for ClassiPyR

+#

+# Functions for fetching sample lists and images from remote IFCB Dashboard

+# instances (e.g., https://habon-ifcb.whoi.edu/).

+

+#' @importFrom jsonlite fromJSON

+#' @importFrom curl curl_fetch_memory curl_fetch_disk new_handle

+#' @importFrom iRfcb ifcb_download_dashboard_data

+NULL

+

+#' Get persistent cache directory for dashboard downloads

+#'

+#' Returns the path to the dashboard cache directory. During R CMD check,

+#' uses a temporary directory.

+#'

+#' @return Path to the dashboard cache directory

+#' @export

+#' @examples

+#' cache_dir <- get_dashboard_cache_dir()

+#' print(cache_dir)

+get_dashboard_cache_dir <- function() {

+ if (nzchar(Sys.getenv("_R_CHECK_PACKAGE_NAME_", ""))) {

+ return(file.path(tempdir(), "ClassiPyR", "dashboard"))

+ }

+ file.path(tools::R_user_dir("ClassiPyR", "cache"), "dashboard")

+}

+

+#' Parse an IFCB Dashboard URL

+#'

+#' Extracts the base URL and optional dataset name from a Dashboard URL.

+#'

+#' @param url Character. A Dashboard URL, e.g.

+#' \code{"https://habon-ifcb.whoi.edu/"} or

+#' \code{"https://habon-ifcb.whoi.edu/timeline?dataset=tangosund"}.

+#' @return A list with \code{base_url} (without trailing slash) and

+#' \code{dataset_name} (character or NULL).

+#' @export

+#' @examples

+#' parse_dashboard_url("https://habon-ifcb.whoi.edu/")

+#' parse_dashboard_url("https://habon-ifcb.whoi.edu/timeline?dataset=tangosund")

+parse_dashboard_url <- function(url) {

+ if (is.null(url) || !is.character(url) || length(url) != 1 || !nzchar(url)) {

+ stop("url must be a non-empty character string")

+ }

+ if (!grepl("^https?://", url)) {

+ stop("url must start with http:// or https://")

+ }

+

+ # Extract dataset from query parameter ?dataset=xxx

+ dataset_name <- NULL

+ query_match <- regmatches(url, regexpr("[?&]dataset=([^&#]+)", url))

+ if (length(query_match) == 1 && nzchar(query_match)) {

+ dataset_name <- sub("^[?&]dataset=", "", query_match)

+ }

+

+ # Strip query string and path components (timeline, etc.) to get base URL

+ base_url <- sub("[?].*$", "", url)

+ base_url <- sub("/timeline/?$", "", base_url)

+ base_url <- sub("/+$", "", base_url)

+

+ list(base_url = base_url, dataset_name = dataset_name)

+}

+

+#' List bins from an IFCB Dashboard

+#'

+#' Fetches the bin list from the Dashboard API. This is a vendored copy of

+#' \code{iRfcb::ifcb_list_dashboard_bins()} from the development version that

+#' supports the \code{dataset_name} parameter.

+#'

+#' @param base_url Character. Base URL (e.g. \code{"https://habon-ifcb.whoi.edu"}).

+#' @param dataset_name Optional character. Dataset slug (e.g. \code{"tangosund"}).

+#' @return Character vector of bin (sample) names.

+#' @export

+#' @examples

+#' \donttest{

+#' bins <- list_dashboard_bins("https://ifcb-data.whoi.edu", "mvco")

+#' }

+# TODO: Replace with iRfcb::ifcb_list_dashboard_bins() once iRfcb >= 0.9.0

+# ships dataset_name support.

+list_dashboard_bins <- function(base_url, dataset_name = NULL) {

+ base_url <- sub("/+$", "", base_url)

+

+ api_url <- paste0(base_url, "/api/list_bins")

+

+ if (!is.null(dataset_name) && nzchar(dataset_name)) {

+ dataset_name <- utils::URLencode(dataset_name, reserved = TRUE)

+ api_url <- paste0(api_url, "?dataset=", dataset_name)

+ }

+

+ response <- tryCatch(

+ curl::curl_fetch_memory(api_url,

+ handle = curl::new_handle(httpheader = c(Accept = "application/json"))),

+ error = function(e) stop("Failed to connect to IFCB Dashboard API: ", e$message)

+ )

+

+ if (response$status_code != 200) {

+ stop("API request failed [", response$status_code, "]: ", api_url)

+ }

+

+ json_content <- rawToChar(response$content)

+ Encoding(json_content) <- "UTF-8"

+

+ parsed <- tryCatch(

+ jsonlite::fromJSON(json_content, flatten = TRUE),

+ error = function(e) stop("Failed to parse JSON content: ", e$message)

+ )

+

+ # The API returns a list with one element containing a data frame with a

+ # "pid" column (or similar). Extract the sample names.

+ if (is.data.frame(parsed)) {

+ bins <- parsed[[1]]

+ } else if (is.list(parsed) && length(parsed) > 0) {

+ first <- parsed[[1]]

+ if (is.data.frame(first)) {

+ bins <- first[[1]]

+ } else {

+ bins <- as.character(first)

+ }

+ } else {

+ bins <- as.character(parsed)

+ }

+

+ as.character(bins)

+}

+

+#' Download and extract PNG images from the Dashboard

+#'

+#' Downloads a zip file of PNG images for a sample from the Dashboard.

+#' Extracts into the cache directory. Skips re-download if PNGs already exist.

+#'

+#' @param base_url Character. Dashboard base URL.

+#' @param sample_name Character. Sample name (bin PID).

+#' @param cache_dir Character. Cache directory. Defaults to

+#' \code{\link{get_dashboard_cache_dir}()}.

+#' @param parallel_downloads Integer. Number of parallel downloads.

+#' @param sleep_time Numeric. Seconds to sleep between download batches.

+#' @param multi_timeout Numeric. Timeout in seconds for multi-file downloads.

+#' @param max_retries Integer. Maximum number of retry attempts.

+#' @return Path to the folder containing extracted PNGs, or NULL on failure.

+#' @export

+download_dashboard_images <- function(base_url, sample_name,

+ cache_dir = get_dashboard_cache_dir(),

+ parallel_downloads = 5, sleep_time = 2,

+ multi_timeout = 120, max_retries = 3) {

+ # Expected path structure: cache_dir/sample_name/sample_name/*.png

+ png_folder <- file.path(cache_dir, sample_name)

+ png_subfolder <- file.path(png_folder, sample_name)

+

+ # Check if PNGs already exist in cache

+ if (dir.exists(png_subfolder)) {

+ existing_pngs <- list.files(png_subfolder, pattern = "\\.png$")

+ if (length(existing_pngs) > 0) {

+ return(png_folder)

+ }

+ }

+

+ dir.create(cache_dir, recursive = TRUE, showWarnings = FALSE)

+

+ # Build the dashboard URL for download

+ # ifcb_download_dashboard_data expects a URL with a path component

+ dashboard_url <- paste0(sub("/+$", "", base_url), "/")

+

+ tryCatch({

+ ifcb_download_dashboard_data(

+ dashboard_url = dashboard_url,

+ samples = sample_name,

+ file_types = "zip",

+ dest_dir = cache_dir,

+ parallel_downloads = parallel_downloads,

+ sleep_time = sleep_time,

+ multi_timeout = multi_timeout,

+ max_retries = max_retries,

+ quiet = TRUE

+ )

+

+ # The download saves to cache_dir/DYYYYMMDD/sample_name.zip

+ # Find the zip file

+ date_part <- substr(sample_name, 1, 9)

+ zip_path <- file.path(cache_dir, date_part, paste0(sample_name, ".zip"))

+

+ if (!file.exists(zip_path)) {

+ # Try alternate location (directly in cache_dir)

+ zip_path <- file.path(cache_dir, paste0(sample_name, ".zip"))

+ }

+

+ if (!file.exists(zip_path)) {

+ warning("Zip file not found after download for: ", sample_name)

+ return(NULL)

+ }

+

+ # Extract to the expected folder structure

+ dir.create(png_subfolder, recursive = TRUE, showWarnings = FALSE)

+ utils::unzip(zip_path, exdir = png_subfolder)

+

+ # Clean up zip file

+ unlink(zip_path)

+ # Also clean up the date folder if empty

+ date_folder <- file.path(cache_dir, date_part)

+ if (dir.exists(date_folder) && length(list.files(date_folder)) == 0) {

+ unlink(date_folder, recursive = TRUE)

+ }

+

+ png_folder

+ }, error = function(e) {

+ warning("Failed to download images for ", sample_name, ": ", e$message)

+ NULL

+ })

+}

+

+#' Download ADC file from the Dashboard

+#'

+#' Downloads the ADC file for a sample from the Dashboard on demand.

+#'

+#' @param base_url Character. Dashboard base URL.

+#' @param sample_name Character. Sample name.

+#' @param cache_dir Character. Cache directory.

+#' @param parallel_downloads Integer. Number of parallel downloads.

+#' @param sleep_time Numeric. Seconds to sleep between download batches.

+#' @param multi_timeout Numeric. Timeout in seconds for multi-file downloads.

+#' @param max_retries Integer. Maximum number of retry attempts.

+#' @return Path to the downloaded ADC file, or NULL on failure.

+#' @export

+download_dashboard_adc <- function(base_url, sample_name,

+ cache_dir = get_dashboard_cache_dir(),

+ parallel_downloads = 5, sleep_time = 2,

+ multi_timeout = 120, max_retries = 3) {

+ date_part <- substr(sample_name, 1, 9)

+ adc_path <- file.path(cache_dir, date_part, paste0(sample_name, ".adc"))

+

+ if (file.exists(adc_path)) {

+ return(adc_path)

+ }

+

+ dashboard_url <- paste0(sub("/+$", "", base_url), "/")

+

+ tryCatch({

+ ifcb_download_dashboard_data(

+ dashboard_url = dashboard_url,

+ samples = sample_name,

+ file_types = "adc",

+ dest_dir = cache_dir,

+ parallel_downloads = parallel_downloads,

+ sleep_time = sleep_time,

+ multi_timeout = multi_timeout,

+ max_retries = max_retries,

+ quiet = TRUE

+ )

+

+ if (file.exists(adc_path)) {

+ return(adc_path)

+ }

+

+ # Try alternate location

+ alt_path <- file.path(cache_dir, paste0(sample_name, ".adc"))

+ if (file.exists(alt_path)) {

+ return(alt_path)

+ }

+

+ NULL

+ }, error = function(e) {

+ warning("Failed to download ADC for ", sample_name, ": ", e$message)

+ NULL

+ })

+}

+

+#' Download and parse autoclass scores from the Dashboard

+#'

+#' Downloads \code{_class_scores.csv} for a sample and extracts the winning

+#' class (column with max score) per ROI.

+#'

+#' @param base_url Character. Dashboard base URL.

+#' @param sample_name Character. Sample name.

+#' @param cache_dir Character. Cache directory.

+#' @param parallel_downloads Integer. Number of parallel downloads.

+#' @param sleep_time Numeric. Seconds to sleep between download batches.

+#' @param multi_timeout Numeric. Timeout in seconds for multi-file downloads.

+#' @param max_retries Integer. Maximum number of retry attempts.

+#' @return Data frame with columns \code{file_name}, \code{class_name},

+#' \code{score}, or NULL on failure.

+#' @export

+download_dashboard_autoclass <- function(base_url, sample_name,

+ cache_dir = get_dashboard_cache_dir(),

+ parallel_downloads = 5, sleep_time = 2,

+ multi_timeout = 120, max_retries = 3) {

+ # The dashboard URL needs to include the dataset path for autoclass

+ dashboard_url <- paste0(sub("/+$", "", base_url), "/")

+

+ tryCatch({

+ ifcb_download_dashboard_data(

+ dashboard_url = dashboard_url,

+ samples = sample_name,

+ file_types = "autoclass",

+ dest_dir = cache_dir,

+ parallel_downloads = parallel_downloads,

+ sleep_time = sleep_time,

+ multi_timeout = multi_timeout,

+ max_retries = max_retries,

+ quiet = TRUE

+ )

+

+ # Find the downloaded CSV file - may have a version suffix

+ csv_pattern <- paste0("^", sample_name, "_class.*\\.csv$")

+ csv_files <- list.files(cache_dir, pattern = csv_pattern, recursive = TRUE,

+ full.names = TRUE)

+

+ if (length(csv_files) == 0) {

+ return(NULL)

+ }

+

+ csv_path <- csv_files[1]

+

+ # Parse the score matrix CSV

+ # Rows = ROIs, columns = class names, values = scores

+ scores <- utils::read.csv(csv_path, check.names = FALSE)

+

+ if (nrow(scores) == 0 || ncol(scores) < 2) {

+ return(NULL)

+ }

+

+ # The first column is typically the ROI identifier

+ # Check if the first column looks like a ROI ID (numeric or sample_NNNNN)

+ first_col <- scores[[1]]

+ class_cols <- if (is.numeric(first_col) || all(grepl("^\\d+$|_\\d+$", as.character(first_col)))) {

+ # First column is ROI identifier, class scores start at column 2

+ names(scores)[-1]

+ } else {

+ names(scores)

+ }

+

+ score_matrix <- as.matrix(scores[, class_cols, drop = FALSE])

+

+ # Extract winning class per ROI

+ max_idx <- apply(score_matrix, 1, which.max)

+ max_scores <- apply(score_matrix, 1, max)

+ winning_classes <- class_cols[max_idx]

+

+ # Extract file names from pid column (e.g., "D20190402T200352_IFCB010_00001")

+ # or fall back to sequential ROI numbers if pid is not available

+ if (is.character(first_col) && all(grepl("_\\d+$", first_col))) {

+ # pid column contains full identifiers — use them directly

+ file_names <- paste0(first_col, ".png")

+ } else if (is.numeric(first_col) || all(grepl("^\\d+$", as.character(first_col)))) {

+ # First column is numeric ROI numbers

+ roi_numbers <- as.integer(first_col)

+ file_names <- sprintf("%s_%05d.png", sample_name, roi_numbers)

+ } else {

+ # Fallback: sequential numbering

+ file_names <- sprintf("%s_%05d.png", sample_name, seq_len(nrow(scores)))

+ }

+

+ data.frame(

+ file_name = file_names,

+ class_name = winning_classes,

+ score = max_scores,

+ stringsAsFactors = FALSE

+ )

+ }, error = function(e) {

+ warning("Failed to download autoclass for ", sample_name, ": ", e$message)

+ NULL

+ })

+}

+

+#' Bulk download zip archives for multiple samples from the Dashboard

+#'

+#' Downloads zip files for all specified samples in a single batched call

+#' to \code{\link[iRfcb]{ifcb_download_dashboard_data}}, leveraging its

+#' built-in parallel download support. Samples already cached are skipped.

+#' After download, zips are extracted and cleaned up.

+#'

+#' @param base_url Character. Dashboard base URL.

+#' @param sample_names Character vector. Sample names to download.

+#' @param cache_dir Character. Cache directory.

+#' @param parallel_downloads Integer. Number of parallel downloads.

+#' @param sleep_time Numeric. Seconds to sleep between download batches.

+#' @param multi_timeout Numeric. Timeout in seconds for multi-file downloads.

+#' @param max_retries Integer. Maximum number of retry attempts.

+#' @return Character vector of sample names that were successfully downloaded

+#' or already cached.

+#' @export

+download_dashboard_images_bulk <- function(base_url, sample_names,

+ cache_dir = get_dashboard_cache_dir(),

+ parallel_downloads = 5, sleep_time = 2,

+ multi_timeout = 120, max_retries = 3) {

+ dir.create(cache_dir, recursive = TRUE, showWarnings = FALSE)

+ dashboard_url <- paste0(sub("/+$", "", base_url), "/")

+

+ # Determine which samples need downloading (not already cached)

+ needs_download <- vapply(sample_names, function(sn) {

+ png_subfolder <- file.path(cache_dir, sn, sn)

+ !(dir.exists(png_subfolder) &&

+ length(list.files(png_subfolder, pattern = "\\.png$")) > 0)

+ }, logical(1))

+

+ to_download <- sample_names[needs_download]

+

+ if (length(to_download) > 0) {

+ tryCatch({

+ ifcb_download_dashboard_data(

+ dashboard_url = dashboard_url,

+ samples = to_download,

+ file_types = "zip",

+ dest_dir = cache_dir,

+ parallel_downloads = parallel_downloads,

+ sleep_time = sleep_time,

+ multi_timeout = multi_timeout,

+ max_retries = max_retries,

+ quiet = TRUE

+ )

+ }, error = function(e) {

+ warning("Bulk zip download failed: ", e$message)

+ })

+

+ # Extract each downloaded zip into the expected folder structure

+ for (sn in to_download) {

+ date_part <- substr(sn, 1, 9)

+ zip_path <- file.path(cache_dir, date_part, paste0(sn, ".zip"))

+

+ if (!file.exists(zip_path)) {

+ zip_path <- file.path(cache_dir, paste0(sn, ".zip"))

+ }

+ if (!file.exists(zip_path)) next

+

+ png_subfolder <- file.path(cache_dir, sn, sn)

+ dir.create(png_subfolder, recursive = TRUE, showWarnings = FALSE)

+ tryCatch(utils::unzip(zip_path, exdir = png_subfolder), error = function(e) NULL)

+ unlink(zip_path)

+

+ # Clean up empty date folder

+ date_folder <- file.path(cache_dir, date_part)

+ if (dir.exists(date_folder) && length(list.files(date_folder)) == 0) {

+ unlink(date_folder, recursive = TRUE)

+ }

+ }

+ }

+

+ # Return all samples that are now cached

+ cached_ok <- vapply(sample_names, function(sn) {

+ png_subfolder <- file.path(cache_dir, sn, sn)

+ dir.exists(png_subfolder) &&

+ length(list.files(png_subfolder, pattern = "\\.png$")) > 0

+ }, logical(1))

+

+ sample_names[cached_ok]

+}

+

+#' Download a single PNG image from the Dashboard

+#'

+#' Downloads one PNG from the Dashboard's \code{/data/} endpoint.

+#' The image is saved to \code{dest_dir/sample_name/file_name}.

+#'

+#' @param base_url Character. Dashboard base URL.

+#' @param sample_name Character. Sample name (bin PID).

+#' @param roi_number Integer. ROI number to download.

+#' @param dest_dir Character. Destination directory.

+#' @param max_retries Integer. Maximum number of retry attempts.

+#' @param timeout Numeric. Request timeout in seconds.

+#' @return File path to the downloaded PNG, or NULL on failure.

+#' @export

+download_dashboard_image_single <- function(base_url, sample_name, roi_number,

+ dest_dir, max_retries = 3,

+ timeout = 15) {

+ file_name <- sprintf("%s_%05d.png", sample_name, roi_number)

+ dest_folder <- file.path(dest_dir, sample_name)

+ dest_path <- file.path(dest_folder, file_name)

+

+ if (file.exists(dest_path)) {

+ return(dest_path)

+ }

+

+ dir.create(dest_folder, recursive = TRUE, showWarnings = FALSE)

+

+ img_url <- paste0(sub("/+$", "", base_url), "/data/", file_name)

+

+ for (attempt in seq_len(max_retries)) {

+ result <- tryCatch({

+ h <- curl::new_handle()

+ curl::handle_setopt(h, connecttimeout = 10, timeout = timeout)

+ response <- curl::curl_fetch_disk(img_url, dest_path, handle = h)

+ if (response$status_code == 200 && file.exists(dest_path) &&

+ file.info(dest_path)$size > 0) {

+ return(dest_path)

+ }

+ # Non-200 status or empty file — no point retrying a 404

+ if (file.exists(dest_path)) unlink(dest_path)

+ if (response$status_code %in% c(404L, 410L)) return(NULL)

+ NULL

+ }, error = function(e) {

+ if (file.exists(dest_path)) unlink(dest_path)

+ NULL

+ })

+

+ if (!is.null(result)) return(result)

+ if (attempt < max_retries) Sys.sleep(0.5)

+ }

+

+ NULL

+}

+

+#' Download individual PNG images from the Dashboard

+#'

+#' Downloads specific PNG files from the Dashboard's \code{/data/} endpoint,

+#' one at a time. This is much faster than downloading entire zip archives

+#' when only a subset of ROIs are needed (e.g., class review mode).

+#'

+#' Samples that fail repeatedly are automatically skipped to avoid long

+#' waits when annotations reference samples not available on the dashboard.

+#'

+#' @param base_url Character. Dashboard base URL.

+#' @param file_names Character vector. PNG file names

+#' (e.g., \code{"D20240716T000431_IFCB134_00108.png"}).

+#' @param dest_dir Character. Destination directory.

+#' @param max_retries Integer. Maximum number of retry attempts per image.

+#' @param sample_fail_threshold Integer. After this many consecutive failures

+#' from the same sample, skip all remaining images from that sample.

+#' @return Character vector of successfully downloaded file names.

+#' @export

+download_dashboard_images_individual <- function(base_url, file_names, dest_dir,

+ max_retries = 3,

+ sample_fail_threshold = 2) {

+ dir.create(dest_dir, recursive = TRUE, showWarnings = FALSE)

+

+ succeeded <- character()

+ # Track consecutive failures per sample to skip unavailable samples early

+ sample_failures <- list()

+ skipped_samples <- character()

+

+ for (fname in file_names) {

+ # Parse sample_name and roi_number from file_name

+ parts <- regmatches(fname, regexec("^(.+)_(\\d+)\\.png$", fname))[[1]]

+ if (length(parts) < 3) next

+

+ sample_name <- parts[2]

+ roi_number <- as.integer(parts[3])

+

+ # Skip samples that have already been marked as unavailable

+ if (sample_name %in% skipped_samples) next

+

+ result <- download_dashboard_image_single(

+ base_url = base_url,

+ sample_name = sample_name,

+ roi_number = roi_number,

+ dest_dir = dest_dir,

+ max_retries = max_retries

+ )

+

+ if (!is.null(result)) {

+ succeeded <- c(succeeded, fname)

+ # Reset failure counter on success

+ sample_failures[[sample_name]] <- 0L

+ } else {

+ prev <- sample_failures[[sample_name]]

+ count <- (if (is.null(prev)) 0L else prev) + 1L

+ sample_failures[[sample_name]] <- count

+ if (count >= sample_fail_threshold) {

+ skipped_samples <- c(skipped_samples, sample_name)

+ warning("Skipping remaining images from ", sample_name,

+ " (", count, " consecutive failures)", call. = FALSE)

+ }

+ }

+ }

+

+ succeeded

+}

diff --git a/R/database.R b/R/database.R

index 536a218..31fd40a 100644

--- a/R/database.R

+++ b/R/database.R

@@ -601,6 +601,79 @@ export_db_to_png <- function(db_path, sample_name, roi_path, png_folder,

})

}

+#' Import annotations from a PNG class folder into the SQLite database

+#'

+#' Scans a folder of PNG images organized in class-name subfolders (via

+#' \code{\link{scan_png_class_folder}}) and imports the annotations into the

+#' database. An optional \code{class_mapping} named vector remaps class names

+#' before saving.

+#'

+#' @param png_folder Path to the top-level folder containing class subfolders

+#' @param db_path Path to the SQLite database file

+#' @param class2use Character vector of class names (preserves index order for

+#' .mat export)

+#' @param class_mapping Optional named character vector mapping scanned class

+#' names to target class names. Names are the source classes, values are the

+#' target classes. Classes not in the mapping are kept as-is.

+#' @param annotator Annotator name (defaults to \code{"imported"})

+#' @return Named list with counts: \code{success}, \code{failed}

+#' @export

+#' @examples

+#' \dontrun{

+#' db_path <- get_db_path("/data/manual")

+#' class2use <- c("Diatom", "Dinoflagellate", "Ciliate")

+#' result <- import_png_folder_to_db(

+#' "/data/png_export", db_path, class2use,

+#' class_mapping = c("OldName" = "NewName"),

+#' annotator = "Jane"

+#' )

+#' cat(result$success, "imported,", result$failed, "failed\n")

+#' }

+import_png_folder_to_db <- function(png_folder, db_path, class2use,

+ class_mapping = NULL,

+ annotator = "imported") {

+ scan_result <- scan_png_class_folder(png_folder)

+

+ counts <- list(success = 0L, failed = 0L)

+

+ if (nrow(scan_result$annotations) == 0) {

+ return(counts)

+ }

+

+ annotations <- scan_result$annotations

+

+ # Apply class mapping if provided

+ if (!is.null(class_mapping) && length(class_mapping) > 0) {

+ mapped <- class_mapping[annotations$class_name]

+ has_mapping <- !is.na(mapped)

+ annotations$class_name[has_mapping] <- mapped[has_mapping]

+ }

+

+ # Group by sample_name and save each sample

+

+ sample_names <- unique(annotations$sample_name)

+

+ for (sn in sample_names) {

+ sample_rows <- annotations[annotations$sample_name == sn, ]

+

+ classifications <- data.frame(

+ file_name = sample_rows$file_name,

+ class_name = sample_rows$class_name,

+ stringsAsFactors = FALSE

+ )

+

+ ok <- save_annotations_db(db_path, sn, classifications, class2use,

+ annotator)

+ if (isTRUE(ok)) {

+ counts$success <- counts$success + 1L

+ } else {

+ counts$failed <- counts$failed + 1L

+ }

+ }

+

+ counts

+}

+

#' Bulk export all annotated samples from SQLite to class-organized PNGs

#'

#' Exports every annotated sample in the database to PNG images organized

@@ -650,3 +723,273 @@ export_all_db_to_png <- function(db_path, png_folder, roi_path_map,

counts

}

+

+#' List all classes with counts in the annotations database

+#'

+#' Queries the database for distinct class names and their annotation counts.

+#' Useful for populating class review mode dropdowns. Optional filters restrict

+#' results to annotations matching a given year, month, or instrument.

+#'

+#' @param db_path Path to the SQLite database file

+#' @param year Optional year filter (e.g. \code{"2023"}). When not \code{"all"}

+#' or \code{NULL}, restricts to sample names starting with \code{DYYYY}.

+#' @param month Optional month filter (e.g. \code{"03"}). When not \code{"all"}

+#' or \code{NULL}, restricts to sample names with that month at positions 6-7.

+#' @param instrument Optional instrument filter (e.g. \code{"IFCB134"}). When

+#' not \code{"all"} or \code{NULL}, restricts to sample names ending with

+#' \code{_INSTRUMENT}.

+#' @param annotator Optional annotator name filter (e.g. \code{"Jane"}). When

+#' not \code{"all"} or \code{NULL}, restricts to annotations by that annotator.

+#' @return Data frame with columns \code{class_name} and \code{count}, ordered

+#' alphabetically by class name. Returns an empty data frame if the database

+#' does not exist or has no annotations.

+#' @export

+#' @examples

+#' \dontrun{

+#' db_path <- get_db_path("/data/manual")

+#' classes <- list_classes_db(db_path)

+#' classes_2023 <- list_classes_db(db_path, year = "2023")

+#' }

+list_classes_db <- function(db_path, year = NULL, month = NULL,

+ instrument = NULL, annotator = NULL) {

+ empty <- data.frame(class_name = character(), count = integer(),

+ stringsAsFactors = FALSE)

+

+ if (!file.exists(db_path)) {

+ return(empty)

+ }

+

+ con <- dbConnect(SQLite(), db_path)

+ on.exit(dbDisconnect(con), add = TRUE)

+

+ tables <- dbGetQuery(con, "SELECT name FROM sqlite_master WHERE type='table'")

+ if (!"annotations" %in% tables$name) {

+ return(empty)

+ }

+

+ where <- build_sample_filter_clause(year, month, instrument,

+ annotator = annotator)

+

+ sql <- paste0(

+ "SELECT class_name, COUNT(*) AS count FROM annotations",

+ where$clause,

+ " GROUP BY class_name ORDER BY class_name"

+ )

+

+ if (length(where$params) > 0) {

+ dbGetQuery(con, sql, params = where$params)

+ } else {

+ dbGetQuery(con, sql)

+ }

+}

+

+#' Load all annotations for a specific class from the database

+#'

+#' Returns every annotation matching \code{class_name}, with a computed

+#' \code{file_name} column for gallery display. Optional filters restrict

+#' results by year, month, or instrument.

+#'

+#' @param db_path Path to the SQLite database file

+#' @param class_name Class name to load

+#' @param year Optional year filter (e.g. \code{"2023"})

+#' @param month Optional month filter (e.g. \code{"03"})

+#' @param instrument Optional instrument filter (e.g. \code{"IFCB134"})

+#' @param annotator Optional annotator name filter (e.g. \code{"Jane"})

+#' @return Data frame with columns \code{sample_name}, \code{roi_number},

+#' \code{class_name}, and \code{file_name}. Returns \code{NULL} if no

+#' annotations match.

+#' @export

+#' @examples

+#' \dontrun{

+#' db_path <- get_db_path("/data/manual")

+#' diatoms <- load_class_annotations_db(db_path, "Diatom")

+#' diatoms_2023 <- load_class_annotations_db(db_path, "Diatom", year = "2023")

+#' }

+load_class_annotations_db <- function(db_path, class_name, year = NULL,

+ month = NULL, instrument = NULL,

+ annotator = NULL) {

+ if (!file.exists(db_path)) {

+ return(NULL)

+ }

+

+ con <- dbConnect(SQLite(), db_path)

+ on.exit(dbDisconnect(con), add = TRUE)

+

+ where <- build_sample_filter_clause(year, month, instrument,

+ annotator = annotator)

+ params <- c(list(class_name), where$params)

+

+ rows <- dbGetQuery(con, paste0(

+ "SELECT sample_name, roi_number, class_name FROM annotations WHERE class_name = ?",

+ if (nzchar(where$clause)) gsub("^ WHERE ", " AND ", where$clause),

+ " ORDER BY sample_name, roi_number"

+ ), params = params)

+

+ if (nrow(rows) == 0) {

+ return(NULL)

+ }

+

+ rows$file_name <- sprintf("%s_%05d.png", rows$sample_name, rows$roi_number)

+ rows

+}

+

+#' Save class review changes to the database

+#'

+#' Performs row-level UPDATEs for reclassified images identified during class

+#' review mode. Only the changed rows are updated; other annotations for the

+#' same samples are left untouched.

+#'

+#' @param db_path Path to the SQLite database file

+#' @param changes_df Data frame with columns \code{sample_name},

+#' \code{roi_number}, and \code{new_class_name}

+#' @param annotator Annotator name

+#' @return Integer count of rows updated

+#' @export

+#' @examples

+#' \dontrun{

+#' db_path <- get_db_path("/data/manual")

+#' changes <- data.frame(

+#' sample_name = "D20230101T120000_IFCB134",

+#' roi_number = 5L,

+#' new_class_name = "Ciliate"

+#' )

+#' save_class_review_changes_db(db_path, changes, "Jane")

+#' }

+save_class_review_changes_db <- function(db_path, changes_df, annotator) {

+ if (is.null(changes_df) || nrow(changes_df) == 0) {

+ return(0L)

+ }

+

+ con <- dbConnect(SQLite(), db_path)

+ on.exit(dbDisconnect(con), add = TRUE)

+

+ init_db_schema(con)

+

+ timestamp <- format(Sys.time(), "%Y-%m-%d %H:%M:%S")

+ updated <- 0L

+

+ tryCatch({

+ dbExecute(con, "BEGIN TRANSACTION")

+

+ for (i in seq_len(nrow(changes_df))) {

+ n <- dbExecute(con,

+ "UPDATE annotations SET class_name = ?, annotator = ?, timestamp = ?, is_manual = 1 WHERE sample_name = ? AND roi_number = ?",

+ params = list(

+ changes_df$new_class_name[i],

+ annotator,

+ timestamp,

+ changes_df$sample_name[i],

+ changes_df$roi_number[i]

+ )

+ )

+ updated <- updated + as.integer(n)

+ }

+

+ dbExecute(con, "COMMIT")

+ updated

+ }, error = function(e) {

+ tryCatch(dbExecute(con, "ROLLBACK"), error = function(e2) NULL)

+ warning("Failed to save class review changes: ", e$message)

+ 0L

+ })

+}

+

+#' List distinct years, months, and instruments from annotations

+#'

+#' Extracts metadata from sample names in the annotations table for use as

+#' filter options. Sample names follow the IFCB naming convention

+#' \code{DYYYYMMDDTHHMMSS_INSTRUMENT}.

+#'

+#' @param db_path Path to the SQLite database file

+#' @return A list with character vectors: \code{years}, \code{months},

+#' \code{instruments}, and \code{annotators}. Returns empty vectors if the

+#' database does not exist or has no annotations.

+#' @export

+#' @examples

+#' \dontrun{

+#' db_path <- get_db_path("/data/manual")

+#' meta <- list_annotation_metadata_db(db_path)

+#' meta$years # e.g. c("2022", "2023")

+#' meta$months # e.g. c("01", "06", "12")

+#' meta$instruments # e.g. c("IFCB134", "IFCB135")

+#' meta$annotators # e.g. c("Jane", "imported")

+#' }

+list_annotation_metadata_db <- function(db_path) {

+ empty <- list(years = character(), months = character(),

+ instruments = character(), annotators = character())

+

+ if (!file.exists(db_path)) {

+ return(empty)

+ }

+

+ con <- dbConnect(SQLite(), db_path)

+ on.exit(dbDisconnect(con), add = TRUE)

+

+ tables <- dbGetQuery(con, "SELECT name FROM sqlite_master WHERE type='table'")

+ if (!"annotations" %in% tables$name) {

+ return(empty)

+ }

+

+ samples <- dbGetQuery(con,

+ "SELECT DISTINCT sample_name FROM annotations"

+ )$sample_name

+

+ annotators <- sort(dbGetQuery(con,

+ "SELECT DISTINCT annotator FROM annotations WHERE annotator IS NOT NULL"

+ )$annotator)

+

+ if (length(samples) == 0) {

+ return(list(years = character(), months = character(),

+ instruments = character(), annotators = annotators))

+ }

+

+ years <- sort(unique(substr(samples, 2, 5)))

+ months <- sort(unique(substr(samples, 6, 7)))

+ instruments <- sort(unique(sub(".*_", "", samples)))

+

+ list(years = years, months = months, instruments = instruments,

+ annotators = annotators)

+}

+

+# Build WHERE clause fragments for sample_name filtering

+#

+# @param year Year string or "all"/NULL

+# @param month Month string or "all"/NULL

+# @param instrument Instrument string or "all"/NULL

+# @return List with `clause` (SQL fragment starting with " WHERE " or "") and

+# `params` (list of bind values)

+# @keywords internal

+build_sample_filter_clause <- function(year = NULL, month = NULL,

+ instrument = NULL,

+ annotator = NULL) {

+ conditions <- character()

+ params <- list()

+

+ if (!is.null(year) && year != "all") {

+ conditions <- c(conditions, "sample_name LIKE ?")

+ params <- c(params, list(paste0("D", year, "%")))

+ }

+

+ if (!is.null(month) && month != "all") {

+ conditions <- c(conditions, "sample_name LIKE ?")

+ params <- c(params, list(paste0("D____", month, "%")))

+ }

+

+ if (!is.null(instrument) && instrument != "all") {

+ conditions <- c(conditions, "sample_name LIKE ?")

+ params <- c(params, list(paste0("%_", instrument)))

+ }

+

+ if (!is.null(annotator) && annotator != "all") {

+ conditions <- c(conditions, "annotator = ?")

+ params <- c(params, list(annotator))

+ }

+

+ clause <- if (length(conditions) > 0) {

+ paste0(" WHERE ", paste(conditions, collapse = " AND "))

+ } else {

+ ""

+ }

+

+ list(clause = clause, params = params)

+}

diff --git a/R/sample_loading.R b/R/sample_loading.R

index 0f97e9f..6066db9 100644

--- a/R/sample_loading.R

+++ b/R/sample_loading.R

@@ -15,9 +15,12 @@ NULL

#' \item{class_name}{Predicted class name (e.g., `Diatom`).}

#' }

#'

-#' An optional column may also be included:

+#' Optional columns may also be included:

#' \describe{

#' \item{score}{Classification confidence value between 0 and 1.}

+#' \item{class_name_auto}{Raw (unthresholded) class prediction. When

+#' \code{use_threshold = FALSE} and this column exists, its values are

+#' used as \code{class_name}.}

#' }

#'

#' The CSV file must be named after the sample it describes

@@ -25,6 +28,9 @@ NULL

#' Folder configured in the app (subfolders are searched recursively).

#'

#' @param csv_path Path to classification CSV file

+#' @param use_threshold Logical, whether to use the threshold-filtered

+#' \code{class_name} column (default \code{TRUE}) or the raw

+#' \code{class_name_auto} column when available.

#' @return Data frame with classifications. Expected columns: `file_name`,

#' `class_name`, and optionally `score`.

#' @export

@@ -34,9 +40,15 @@ NULL

#' classifications <- load_from_csv("/path/to/D20230101T120000_IFCB134.csv")

#' head(classifications)

#' }

-load_from_csv <- function(csv_path) {

+load_from_csv <- function(csv_path, use_threshold = TRUE) {

classifications <- utils::read.csv(csv_path, stringsAsFactors = FALSE)

+ # When threshold is off and class_name_auto exists, use raw predictions

+

+ if (!use_threshold && "class_name_auto" %in% names(classifications)) {

+ classifications$class_name <- classifications$class_name_auto

+ }

+

# Strip trailing 3-digit suffix from class names (e.g., "Diatom_001" -> "Diatom")

# This matches iRfcb behavior where class folders may include numeric suffixes

classifications$class_name <- sub("_\\d{3}$", "", classifications$class_name)

@@ -55,6 +67,80 @@ load_from_csv <- function(csv_path) {

classifications

}

+#' Load classifications from HDF5 classifier output file

+#'

+#' Reads an HDF5 classifier output file (from iRfcb 0.8.0+) and extracts

+#' class predictions. Requires the \pkg{hdf5r} package.

+#'

+#' @param h5_path Path to classifier H5 file (matching pattern *_class*.h5)

+#' @param sample_name Sample name (e.g., "D20220522T000439_IFCB134")

+#' @param roi_dimensions Data frame from \code{\link{read_roi_dimensions}}

+#' @param use_threshold Logical, whether to use the threshold-filtered

+#' \code{class_name} dataset (default \code{TRUE}) or the raw

+#' \code{class_name_auto} dataset.

+#' @return Data frame with columns: file_name, class_name, score, width, height,

+#' roi_area

+#' @export

+#' @examples

+#' \dontrun{

+#' dims <- read_roi_dimensions("/data/raw/2022/D20220522/D20220522T000439_IFCB134.adc")

+#' classifications <- load_from_h5(

+#' h5_path = "/data/classified/D20220522T000439_IFCB134_class.h5",

+#' sample_name = "D20220522T000439_IFCB134",

+#' roi_dimensions = dims,

+#' use_threshold = TRUE

+#' )

+#' head(classifications)

+#' }

+load_from_h5 <- function(h5_path, sample_name, roi_dimensions, use_threshold = TRUE) {

+ if (!requireNamespace("hdf5r", quietly = TRUE)) {

+ stop("Package 'hdf5r' is required to read H5 classification files. ",

+ "Install it with: install.packages('hdf5r')")

+ }

+

+ h5 <- hdf5r::H5File$new(h5_path, "r")

+ on.exit(h5$close_all(), add = TRUE)

+

+ roi_numbers <- h5[["roi_numbers"]]$read()

+

+ if (use_threshold) {

+ class_names <- h5[["class_name"]]$read()

+ } else {

+ class_names <- h5[["class_name_auto"]]$read()

+ }

+

+ class_names[is.na(class_names)] <- "unclassified"

+

+ # Extract per-ROI max score from output_scores matrix (num_classes x num_rois)

+ output_scores <- h5[["output_scores"]]$read()

+ scores <- apply(output_scores, 2, max)

+

+ # Match ROI dimensions

+ roi_data <- lapply(roi_numbers, function(rn) {

+ idx <- which(roi_dimensions$roi_number == rn)

+ if (length(idx) > 0) {

+ list(width = roi_dimensions$width[idx],

+ height = roi_dimensions$height[idx],

+ area = roi_dimensions$area[idx])

+ } else {

+ list(width = NA_real_, height = NA_real_, area = NA_real_)

+ }

+ })

+

+ classifications <- data.frame(

+ file_name = sprintf("%s_%05d.png", sample_name, roi_numbers),

+ class_name = class_names,

+ score = scores,

+ width = vapply(roi_data, `[[`, numeric(1), "width"),

+ height = vapply(roi_data, `[[`, numeric(1), "height"),

+ roi_area = vapply(roi_data, `[[`, numeric(1), "area"),

+ stringsAsFactors = FALSE

+ )

+

+ # Sort by area (descending)

+ classifications[order(-classifications$roi_area), ]

+}

+

#' Load classifications from SQLite database

#'

#' Reads annotations for a sample from the SQLite database and returns a data

@@ -257,6 +343,111 @@ create_new_classifications <- function(sample_name, roi_dimensions) {

classifications[order(-classifications$roi_area), ]

}

+#' Scan a PNG folder with class subfolders

+#'

+#' Scans a directory containing PNG images organized into class-name

+#' subfolders (e.g. as exported by \code{\link{export_db_to_png}} or other

+#' tools). Folder names follow the iRfcb convention where a trailing 3-digit

+#' suffix is stripped (e.g. \code{Diatom_001} becomes \code{Diatom}).

+#'

+#' @param png_folder Path to the top-level folder containing class subfolders

+#' @return A list with components:

+#' \describe{

+#' \item{annotations}{Data frame with columns \code{sample_name},

+#' \code{roi_number}, \code{file_name}, and \code{class_name}}

+#' \item{classes_found}{Character vector of unique class names found}

+#' \item{sample_names}{Character vector of unique sample names found}

+#' }

+#' @export

+#' @examples

+#' \dontrun{

+#' result <- scan_png_class_folder("/data/png_export")

+#' head(result$annotations)

+#' result$classes_found

+#' result$sample_names

+#' }

+scan_png_class_folder <- function(png_folder) {

+ if (!dir.exists(png_folder)) {

+ stop("PNG folder does not exist: ", png_folder)

+ }

+

+ subdirs <- list.dirs(png_folder, recursive = FALSE, full.names = TRUE)

+

+ if (length(subdirs) == 0) {

+ return(list(

+ annotations = data.frame(

+ sample_name = character(),

+ roi_number = integer(),

+ file_name = character(),

+ class_name = character(),

+ stringsAsFactors = FALSE

+ ),

+ classes_found = character(),

+ sample_names = character()

+ ))

+ }

+

+ all_rows <- list()

+ seen_rois <- list()

+

+ for (subdir in subdirs) {

+ class_name <- sub("_\\d{3}$", "", basename(subdir))

+ png_files <- list.files(subdir, pattern = "\\.png$", full.names = FALSE)

+

+ for (fn in png_files) {

+ # Parse sample_name and roi_number from filename

+ # Expected format: SampleName_NNNNN.png (5-digit ROI number)

+ m <- regmatches(fn, regexec("^(.+)_(\\d{5})\\.png$", fn))[[1]]

+ if (length(m) < 3) {

+ warning("Skipping file with unexpected name format: ", fn)

+ next

+ }

+

+ sample_name <- m[2]

+ roi_number <- as.integer(m[3])

+ roi_key <- paste0(sample_name, "_", roi_number)

+

+ if (!is.null(seen_rois[[roi_key]])) {

+ warning(sprintf("Duplicate ROI %s found in class '%s' (already in '%s'), using first occurrence",

+ roi_key, class_name, seen_rois[[roi_key]]))

+ next

+ }

+ seen_rois[[roi_key]] <- class_name

+

+ all_rows[[length(all_rows) + 1L]] <- data.frame(

+ sample_name = sample_name,

+ roi_number = roi_number,

+ file_name = fn,

+ class_name = class_name,

+ stringsAsFactors = FALSE

+ )

+ }

+ }

+

+ if (length(all_rows) == 0) {

+ return(list(

+ annotations = data.frame(

+ sample_name = character(),

+ roi_number = integer(),

+ file_name = character(),

+ class_name = character(),

+ stringsAsFactors = FALSE

+ ),

+ classes_found = character(),

+ sample_names = character()

+ ))

+ }

+

+ annotations <- do.call(rbind, all_rows)

+ rownames(annotations) <- NULL

+

+ list(

+ annotations = annotations,

+ classes_found = sort(unique(annotations$class_name)),

+ sample_names = sort(unique(annotations$sample_name))

+ )

+}

+

#' Filter classifications to only include extracted images

#'

#' Filters a classifications data frame to only include ROIs that have

diff --git a/R/utils.R b/R/utils.R

index d8c3717..4505f3a 100644

--- a/R/utils.R

+++ b/R/utils.R

@@ -136,12 +136,16 @@ load_file_index <- function() {

#' If folder paths are not provided, they are read from saved settings.

#'

#' @param roi_folder Path to ROI data folder. If NULL, read from saved settings.

-#' @param csv_folder Path to classification folder (CSV/MAT). If NULL, read from saved settings.

+#' @param csv_folder Path to classification folder (CSV/H5/MAT). If NULL, read from saved settings.

#' @param output_folder Path to output folder for MAT annotations. If NULL, read from saved settings.

#' @param verbose If TRUE, print progress messages. Default TRUE.

#' @param db_folder Path to the database folder for SQLite annotations. If NULL,

#' read from saved settings; if not found in settings, defaults to

#' \code{\link{get_default_db_dir}()}.

+#' @param data_source Either \code{"local"} (default) for local folder scanning,

+#' or \code{"dashboard"} to fetch the sample list from a remote IFCB Dashboard.

+#' @param dashboard_url When \code{data_source = "dashboard"}, the full Dashboard

+#' URL (e.g. \code{"https://habon-ifcb.whoi.edu/timeline?dataset=tangosund"}).

#' @return Invisibly returns the file index list, or NULL if roi_folder is invalid.

#' @export

#' @examples

@@ -156,12 +160,17 @@ load_file_index <- function() {

#' output_folder = "/data/ifcb/manual"

#' )

#'

+#' # Scan from a remote Dashboard

+#' rescan_file_index(data_source = "dashboard",

+#' dashboard_url = "https://habon-ifcb.whoi.edu/timeline?dataset=tangosund")

+#'

#' # Use in a cron job:

#' # Rscript -e 'ClassiPyR::rescan_file_index()'

#' }

rescan_file_index <- function(roi_folder = NULL, csv_folder = NULL,

output_folder = NULL, verbose = TRUE,

- db_folder = NULL) {

+ db_folder = NULL, data_source = "local",

+ dashboard_url = NULL) {

# Read from saved settings if not provided

if (is.null(roi_folder) || is.null(csv_folder) || is.null(output_folder) ||

is.null(db_folder)) {

@@ -183,6 +192,58 @@ rescan_file_index <- function(roi_folder = NULL, csv_folder = NULL,

db_folder <- get_default_db_dir()

}

+ # Dashboard mode: fetch sample list from remote API

+ if (identical(data_source, "dashboard")) {

+ if (is.null(dashboard_url) || !nzchar(dashboard_url)) {

+ if (verbose) message("Dashboard URL not set")

+ return(invisible(NULL))

+ }

+

+ parsed <- parse_dashboard_url(dashboard_url)

+ if (verbose) message("Fetching bin list from: ", parsed$base_url)

+

+ bins <- tryCatch(

+ list_dashboard_bins(parsed$base_url, parsed$dataset_name),

+ error = function(e) {

+ if (verbose) message("Failed to list dashboard bins: ", e$message)

+ character()

+ }

+ )

+

+ sample_names <- as.character(bins)

+ if (verbose) message(" Found ", length(sample_names), " samples")

+

+ if (length(sample_names) == 0) {

+ if (verbose) message("No samples found on dashboard.")

+ return(invisible(NULL))

+ }

+

+ # Check DB for existing annotations

+ db_path <- get_db_path(db_folder)

+ annotated_db <- list_annotated_samples_db(db_path)

+ annotated <- annotated_db[annotated_db %in% sample_names]

+

+ index_data <- list(

+ data_source = "dashboard",

+ dashboard_url = dashboard_url,

+ dashboard_base_url = parsed$base_url,

+ dashboard_dataset = parsed$dataset_name,

+ sample_names = sample_names,

+ classified_samples = character(),

+ annotated_samples = annotated,

+ roi_path_map = list(),

+ csv_path_map = list(),

+ classifier_mat_files = list(),

+ classifier_h5_files = list(),

+ timestamp = as.character(Sys.time())

+ )

+

+ save_file_index(index_data)

+ if (verbose) message("File index saved to: ", get_file_index_path())

+

+ return(invisible(index_data))

+ }

+

# Validate ROI folder

roi_valid <- !is.null(roi_folder) && length(roi_folder) == 1 &&

!isTRUE(is.na(roi_folder)) && nzchar(roi_folder) && dir.exists(roi_folder)

@@ -221,6 +282,7 @@ rescan_file_index <- function(roi_folder = NULL, csv_folder = NULL,

# Scan classification files

classified <- character()

mat_file_map <- list()

+ h5_file_map <- list()

csv_map <- list()

if (csv_valid) {

@@ -248,9 +310,21 @@ rescan_file_index <- function(roi_folder = NULL, csv_folder = NULL,

}

}

+ h5_files <- list.files(csv_folder, pattern = "_class.*\\.h5$",

+ recursive = TRUE, full.names = TRUE)

+

+ for (h5_file in h5_files) {

+ h5_basename <- basename(h5_file)

+ sample_from_h5 <- sub("_class.*\\.h5$", "", h5_basename)

+ if (sample_from_h5 %in% sample_names) {

+ h5_file_map[[sample_from_h5]] <- h5_file

+ }

+ }

+

mat_samples <- names(mat_file_map)

+ h5_samples <- names(h5_file_map)

csv_matched <- csv_sample_names[csv_sample_names %in% sample_names]

- classified <- unique(c(csv_matched, mat_samples))

+ classified <- unique(c(csv_matched, h5_samples, mat_samples))

if (verbose) message(" Found ", length(classified), " classified samples")

}

@@ -286,6 +360,7 @@ rescan_file_index <- function(roi_folder = NULL, csv_folder = NULL,

roi_path_map = roi_map,

csv_path_map = csv_map,

classifier_mat_files = mat_file_map,

+ classifier_h5_files = h5_file_map,

timestamp = as.character(Sys.time())

)

diff --git a/README.md b/README.md

index 65a6a1d..d07276d 100644

--- a/README.md

+++ b/README.md

@@ -16,6 +16,9 @@ A Shiny application for manual (human) image classification and validation of Im

## Features

- **Dual Mode**: Validate existing classifications or annotate from scratch

+- **Class Review**: Review and reclassify all images of a specific class across the entire database

+- **IFCB Dashboard**: Work directly with remote IFCB Dashboard instances - no local data files needed

+- **Live Prediction**: One-click CNN classification via a remote Gradio API using [iRfcb](https://github.com/EuropeanIFCBGroup/iRfcb)

- **Multiple Formats**: Load from CSV or MATLAB classifier output

- **SQLite Storage**: Annotations stored in a local SQLite database by default - no Python needed

- **Efficient Workflow**: Drag-select, batch relabeling, class filtering

diff --git a/_pkgdown.yml b/_pkgdown.yml

index dda7f38..fe49626 100644

--- a/_pkgdown.yml

+++ b/_pkgdown.yml

@@ -48,10 +48,12 @@ reference:

- load_class_list

- load_from_classifier_mat

- load_from_csv

+ - load_from_h5

- load_from_mat

- load_from_db

- create_new_classifications

- filter_to_extracted

+ - scan_png_class_folder

- title: Sample Saving

desc: Functions for saving annotations and exporting images

contents:

@@ -66,6 +68,7 @@ reference:

- save_annotations_db

- load_annotations_db

- list_annotated_samples_db

+ - list_annotation_metadata_db

- update_annotator

- import_mat_to_db

- import_all_mat_to_db

@@ -73,6 +76,10 @@ reference:

- export_all_db_to_mat

- export_db_to_png

- export_all_db_to_png

+ - import_png_folder_to_db

+ - list_classes_db

+ - load_class_annotations_db

+ - save_class_review_changes_db

- title: File Index Cache

desc: Functions for managing the file index cache for faster startup

contents:

@@ -80,6 +87,18 @@ reference:

- load_file_index

- save_file_index

- rescan_file_index

+- title: Dashboard

+ desc: Functions for working with remote IFCB Dashboard instances

+ contents:

+ - parse_dashboard_url

+ - list_dashboard_bins

+ - download_dashboard_images

+ - download_dashboard_images_bulk

+ - download_dashboard_image_single

+ - download_dashboard_images_individual

+ - download_dashboard_adc

+ - download_dashboard_autoclass

+ - get_dashboard_cache_dir

- title: Utilities

desc: Helper functions for IFCB data processing

contents:

diff --git a/codecov.yml b/codecov.yml

index 02eff64..512fa26 100644

--- a/codecov.yml

+++ b/codecov.yml

@@ -13,7 +13,4 @@ ignore:

- "tests/"

- "docs/"

-comment:

- layout: "reach,diff,flags,files"

- behavior: default

- require_changes: true

+comment: false

diff --git a/inst/CITATION b/inst/CITATION

index 8b93f47..a277ae8 100644

--- a/inst/CITATION

+++ b/inst/CITATION

@@ -9,12 +9,12 @@ bibentry(

comment = c(ORCID = "0000-0002-8283-656X"))

),

year = "2026",

- note = "R package version 0.1.1",

+ note = "R package version 0.2.0",

url = "https://doi.org/10.5281/zenodo.18414999",

textVersion = paste(

"Torstensson, A. (2026).",

"ClassiPyR: A Shiny Application for Manual Image Classification and Validation of IFCB Data.",

- "R package version 0.1.1.",

+ "R package version 0.2.0.",

"https://doi.org/10.5281/zenodo.18414999"

)

)

diff --git a/inst/app/server.R b/inst/app/server.R

index de99b4c..17285fe 100644

--- a/inst/app/server.R

+++ b/inst/app/server.R

@@ -60,7 +60,13 @@ server <- function(input, output, session) {

resource_path_name = NULL, # Session-specific Shiny resource path for images

is_loading = FALSE, # TRUE while loading/saving operations in progress

measure_mode = FALSE, # TRUE when measure tool is active

- pending_sample_select = NULL # Pending sample selection for dropdown update

+ pending_sample_select = NULL, # Pending sample selection for dropdown update

+

+ # Class review mode state

+ class_review_mode = FALSE, # TRUE when in class review mode

+ class_review_class = NULL, # Currently reviewed class name

+ class_review_samples = character(), # Unique sample names in class review

+ class_review_original = NULL # Original classifications snapshot for diff

)

# Settings file for persistence (uses R_user_dir for CRAN compliance)

@@ -104,7 +110,17 @@ server <- function(input, output, session) {

class2use_path = NULL, # Path to class2use file for auto-loading

python_venv_path = NULL, # NULL = use ./venv in working directory

save_format = "sqlite", # "sqlite" (default), "mat", or "both"

- export_statistics = TRUE # Write validation statistics CSV files

+ export_statistics = TRUE, # Write validation statistics CSV files

+ skip_class_png = "", # Class name to exclude from PNG export

+ data_source = "local", # "local" or "dashboard"

+ dashboard_url = "", # IFCB Dashboard URL

+ dashboard_autoclass = FALSE, # Use dashboard auto-classifications for validation

+ gradio_url = "", # Gradio API URL for CNN classification

+ prediction_model = "", # Model name for live prediction

+ dashboard_parallel_downloads = 5,

+ dashboard_sleep_time = 2,

+ dashboard_multi_timeout = 120,

+ dashboard_max_retries = 3

)

if (file.exists(settings_file)) {

@@ -160,7 +176,17 @@ server <- function(input, output, session) {

auto_sync = saved_settings$auto_sync,

python_venv_path = saved_settings$python_venv_path,

save_format = saved_settings$save_format,

- export_statistics = saved_settings$export_statistics

+ export_statistics = saved_settings$export_statistics,

+ skip_class_png = saved_settings$skip_class_png,

+ data_source = saved_settings$data_source,

+ dashboard_url = saved_settings$dashboard_url,

+ dashboard_autoclass = saved_settings$dashboard_autoclass,

+ gradio_url = saved_settings$gradio_url,

+ prediction_model = saved_settings$prediction_model,

+ dashboard_parallel_downloads = saved_settings$dashboard_parallel_downloads,

+ dashboard_sleep_time = saved_settings$dashboard_sleep_time,

+ dashboard_multi_timeout = saved_settings$dashboard_multi_timeout,

+ dashboard_max_retries = saved_settings$dashboard_max_retries

)

# Initialize class dropdown with default class list on startup

@@ -173,10 +199,11 @@ server <- function(input, output, session) {

# Store all sample names and their classification status

all_samples <- reactiveVal(character())

- classified_samples <- reactiveVal(character()) # Auto-classified (CSV or classifier MAT)

+ classified_samples <- reactiveVal(character()) # Auto-classified (CSV, H5, or classifier MAT)

annotated_samples <- reactiveVal(character()) # Manually annotated (has .mat in output folder)

- # Store mapping of sample names to classifier MAT file paths

+ # Store mapping of sample names to classifier MAT/H5 file paths

classifier_mat_files <- reactiveVal(list())

+ classifier_h5_files <- reactiveVal(list())

# Path maps: sample_name -> full file path (discovered during scan)

roi_path_map <- reactiveVal(list())

csv_path_map <- reactiveVal(list())

@@ -221,25 +248,93 @@ server <- function(input, output, session) {

size = "l",

easyClose = TRUE,

- # ── Folder Paths ──────────────────────────────────────────────

- h5("Folder Paths"),

+ # ── Data Source ─────────────────────────────────────────────

+ h5("Data Source"),

+

+ radioButtons("cfg_data_source", NULL,

+ choices = c("Local Folders" = "local",

+ "IFCB Dashboard" = "dashboard"),

+ selected = config$data_source, inline = TRUE),

+

+ # Dashboard settings (visible when data source is "dashboard")

+ conditionalPanel(

+ condition = "input.cfg_data_source == 'dashboard'",

+ textInput("cfg_dashboard_url", "Dashboard URL",

+ value = config$dashboard_url, width = "100%",

+ placeholder = "https://habon-ifcb.whoi.edu/timeline?dataset=tangosund"),

+ tags$small(class = "text-muted", style = "display: block; margin-bottom: 10px;",

+ "Enter the IFCB Dashboard URL. Dataset can be specified via ?dataset=name."),

+ checkboxInput("cfg_dashboard_autoclass", "Use dashboard auto-classifications",

+ value = config$dashboard_autoclass),

+ tags$small(class = "text-muted", style = "display: block; margin-bottom: 15px;",

+ "When enabled, downloads auto-classification scores from the dashboard for validation mode."),

+

+ # Advanced download settings (collapsible)

+ tags$details(

+ tags$summary(style = "cursor: pointer; margin-bottom: 10px; color: #666;",

+ "Advanced Download Settings"),

+ fluidRow(

+ column(6, numericInput("cfg_dashboard_parallel_downloads",

+ "Parallel Downloads",

+ value = config$dashboard_parallel_downloads,

+ min = 1, max = 20, step = 1)),

+ column(6, numericInput("cfg_dashboard_sleep_time",

+ "Sleep Time (seconds)",

+ value = config$dashboard_sleep_time,

+ min = 0, max = 30, step = 0.5))

+ ),

+ fluidRow(

+ column(6, numericInput("cfg_dashboard_multi_timeout",

+ "Download Timeout (seconds)",

+ value = config$dashboard_multi_timeout,

+ min = 10, max = 600, step = 10)),

+ column(6, numericInput("cfg_dashboard_max_retries",

+ "Max Retries",

+ value = config$dashboard_max_retries,

+ min = 1, max = 10, step = 1))

+ ),

+ tags$small(class = "text-muted", style = "display: block; margin-bottom: 15px;",

+ "Settings for zip/ADC/autoclass downloads from the dashboard.")

+ )

+ ),

+

+ # ── Classification Folder (both modes) ──────────────────────────

+ h5("Classification"),

div(

- style = "display: flex; gap: 5px; align-items: flex-end; margin-bottom: 15px;",

+ style = "display: flex; gap: 5px; align-items: flex-end; margin-bottom: 5px;",

div(style = "flex: 1;",

- textInput("cfg_csv_folder", "Classification Folder (CSV/MAT)",

+ textInput("cfg_csv_folder", "Classification Folder (CSV/H5/MAT)",

value = config$csv_folder, width = "100%")),

shinyDirButton("browse_csv_folder", "Browse", "Select Classification Folder",

class = "btn-outline-secondary", style = "margin-bottom: 15px;")

),

- div(

- style = "display: flex; gap: 5px; align-items: flex-end; margin-bottom: 15px;",

- div(style = "flex: 1;",

- textInput("cfg_roi_folder", "ROI Data Folder",

- value = config$roi_folder, width = "100%")),

- shinyDirButton("browse_roi_folder", "Browse", "Select ROI Data Folder",

- class = "btn-outline-secondary", style = "margin-bottom: 15px;")

+ conditionalPanel(

+ condition = "input.cfg_data_source == 'dashboard'",

+ tags$small(class = "text-muted", style = "display: block; margin-bottom: 5px;",

+ "Optional. Use local classification files instead of dashboard auto-classifications.")

+ ),

+

+ checkboxInput("cfg_use_threshold", "Apply classification threshold",

+ value = config$use_threshold),

+ tags$small(class = "text-muted", style = "display: block; margin-bottom: 15px;",

+ "When enabled, classifications below the confidence threshold are marked as 'unclassified'."),

+

+ # ── Folder Paths (local mode only) ──────────────────────────────

+ conditionalPanel(

+ condition = "input.cfg_data_source == 'local'",

+

+ h5("ROI Data"),

+

+ div(

+ style = "display: flex; gap: 5px; align-items: flex-end; margin-bottom: 15px;",

+ div(style = "flex: 1;",

+ textInput("cfg_roi_folder", "ROI Data Folder",

+ value = config$roi_folder, width = "100%")),

+ shinyDirButton("browse_roi_folder", "Browse", "Select ROI Data Folder",

+ class = "btn-outline-secondary", style = "margin-bottom: 15px;")

+ )

),

div(

@@ -320,41 +415,60 @@ server <- function(input, output, session) {

# ── Import / Export ────────────────────────────────────────────

h5("Import / Export"),

+ tags$label("Import to SQLite", style = "font-weight: 600; display: block; margin-bottom: 5px;"),

div(

- style = "display: flex; gap: 10px; margin-bottom: 8px;",

- actionButton("import_mat_to_db_btn", "Import .mat \u2192 SQLite",

- icon = icon("database"), class = "btn-outline-secondary btn-sm"),

- actionButton("export_db_to_mat_btn", "Export SQLite \u2192 .mat",

- icon = icon("file-export"), class = "btn-outline-secondary btn-sm"),

- actionButton("export_db_to_png_btn", "Export SQLite \u2192 PNG",

- icon = icon("image"), class = "btn-outline-secondary btn-sm")

+ style = "display: flex; gap: 10px; margin-bottom: 5px;",

+ actionButton("import_mat_to_db_btn", ".mat \u2192 SQLite",

+ icon = icon("file-import"), class = "btn-outline-secondary btn-sm"),

+ actionButton("import_png_to_db_btn", "PNG \u2192 SQLite",

+ icon = icon("file-import"), class = "btn-outline-secondary btn-sm")

),

- tags$small(class = "text-muted",

- "Bulk import/export all annotated samples between storage formats.",

- "PNG export extracts images into class-name subfolders."),

+ tags$small(class = "text-muted", style = "display: block; margin-bottom: 10px;",

+ "Bulk import annotated samples from .mat files or PNG class folders."),

+ tags$label("Export from SQLite", style = "font-weight: 600; display: block; margin-bottom: 5px;"),

div(

- style = "margin-top: 8px;",

+ style = "display: flex; gap: 10px; margin-bottom: 5px;",

+ actionButton("export_db_to_mat_btn", "SQLite \u2192 .mat",

+ icon = icon("file-export"), class = "btn-outline-secondary btn-sm"),

+ actionButton("export_db_to_png_btn", "SQLite \u2192 PNG",

+ icon = icon("file-export"), class = "btn-outline-secondary btn-sm")

+ ),

+ div(

+ style = "margin-bottom: 5px;",

textInput("cfg_skip_class_png", "Skip class in PNG export",

- value = if (!is.null(rv$class2use) && length(rv$class2use) > 0) rv$class2use[1] else "",

+ value = if (nzchar(config$skip_class_png)) config$skip_class_png

+ else if (!is.null(rv$class2use) && length(rv$class2use) > 0) rv$class2use[1]

+ else "",

width = "250px"),

tags$small(class = "text-muted",

"Images with this class are excluded from PNG export.",

- "Pre-filled with the first class in your class list.",

"Leave empty to export all classes.")

),

hr(),

+ # ── Live Prediction ─────────────────────────────────────────

+ h5("Live Prediction"),

+

+ textInput("cfg_gradio_url", "Gradio API URL",

+ value = config$gradio_url, width = "100%",

+ placeholder = "https://irfcb-classify.hf.space"),

+ tags$small(class = "text-muted", style = "display: block; margin-bottom: 10px;",

+ "Enter Gradio API URL for CNN classification. Example: https://irfcb-classify.hf.space"),

+

+ selectInput("cfg_prediction_model", "Prediction Model",

+ choices = if (nzchar(config$prediction_model)) config$prediction_model else NULL,

+ selected = if (nzchar(config$prediction_model)) config$prediction_model else NULL,

+ width = "100%"),

+ tags$small(class = "text-muted", style = "display: block; margin-bottom: 15px;",

+ "Select a CNN model for classification. Models are fetched from the Gradio API."),

+

+ hr(),

+

# ── IFCB Options ──────────────────────────────────────────────

h5("IFCB Options"),

- checkboxInput("cfg_use_threshold", "Apply classification threshold",

- value = config$use_threshold),

- tags$small(class = "text-muted",

- "Only applies to ifcb-analysis MATLAB classifier output (*_class*.mat).",

- "When enabled, classifications below the confidence threshold are marked as 'unclassified'."),

-

div(

style = "display: flex; gap: 10px; align-items: center; margin-top: 10px;",

numericInput("cfg_pixels_per_micron", "Pixels per micron",

@@ -429,9 +543,35 @@ server <- function(input, output, session) {

}

})

-

+ # Fetch available models when Gradio URL changes in settings (debounced)

+ gradio_url_debounced <- debounce(reactive(input$cfg_gradio_url), 1500)

+

+ observeEvent(gradio_url_debounced(), {

+ url <- gradio_url_debounced()

+ if (is.null(url) || !nzchar(url)) {

+ updateSelectInput(session, "cfg_prediction_model", choices = character(0))

+ return()

+ }

+ tryCatch({

+ models <- iRfcb::ifcb_classify_models(url)

+ if (length(models) > 0) {

+ # Preserve current selection if still valid

+ current <- config$prediction_model

+ selected <- if (nzchar(current) && current %in% models) current else models[1]

+ updateSelectInput(session, "cfg_prediction_model",

+ choices = models, selected = selected)

+ } else {

+ updateSelectInput(session, "cfg_prediction_model", choices = character(0))

+ showNotification("No models found at the provided URL.", type = "warning")

+ }

+ }, error = function(e) {

+ updateSelectInput(session, "cfg_prediction_model", choices = character(0))

+ showNotification(paste("Could not fetch models:", e$message), type = "error")

+ })

+ })

+

# Class count display

-

+