This repository presents a comprehensive deep learning solution for Fine-Grained Car Type Classification using the challenging Stanford Cars Dataset. The core objective is to accurately distinguish between 196 visually similar car makes and models (e.g., different trims or model years), a task that demands highly discriminative feature extraction.

The project implements and evaluates four widely used Convolutional Neural Network (CNN) architectures and compares their accuracy, scalability, and efficiency on this fine-grained task.

- Name: Stanford Cars Dataset

- Source: Hugging Face Dataset Link

- Classes: 196 unique car makes and models.

- Total Images: 16,185 (8,144 training, 8,041 testing).

Four distinct models were selected to represent different architectural philosophies and implementation strategies:

| Model | Implementation Strategy | Key Architectural Feature |
|---|---|---|
| ResNet-50 | Transfer learning (fine-tuning) | Residual blocks (skip connections) that make very deep networks trainable. |
| Inception V1 (GoogLeNet) | Transfer learning (fine-tuning) | Inception modules for multi-scale feature extraction. |
| MobileNetV2 | Transfer learning (fine-tuning) | Inverted residual blocks for high efficiency and low latency. |
| VGG-19 | Implemented from scratch with Batch Normalization | Uniform 3x3 convolutional filters, used to test the importance of depth. |
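To give a sense of what fine-tuning changes in the three transfer-learning models, the sketch below counts the parameters of a replacement classification layer with 196 outputs. It assumes the backbones match the torchvision reference models, whose final linear layers take 2048 (ResNet-50), 1024 (GoogLeNet), and 1280 (MobileNetV2) input features. The repository's notebooks may construct their heads differently, and `FEATURE_DIMS` and `head_parameters` are illustrative names, not part of the project code.

```python
# Input width of the final linear layer in each backbone.
# Assumption: these are the torchvision reference architectures.
FEATURE_DIMS = {
    "ResNet-50": 2048,
    "Inception V1 (GoogLeNet)": 1024,
    "MobileNetV2": 1280,
}


def head_parameters(in_features: int, num_classes: int) -> int:
    """Parameters of one fully connected layer: weights plus biases."""
    return in_features * num_classes + num_classes


if __name__ == "__main__":
    for name, dim in FEATURE_DIMS.items():
        print(f"{name}: {head_parameters(dim, 196):,} parameters in a 196-way head")
```

Under these assumptions the new head is small relative to the pre-trained backbone, which is part of why transfer learning is practical on a dataset with only about 8,000 training images.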

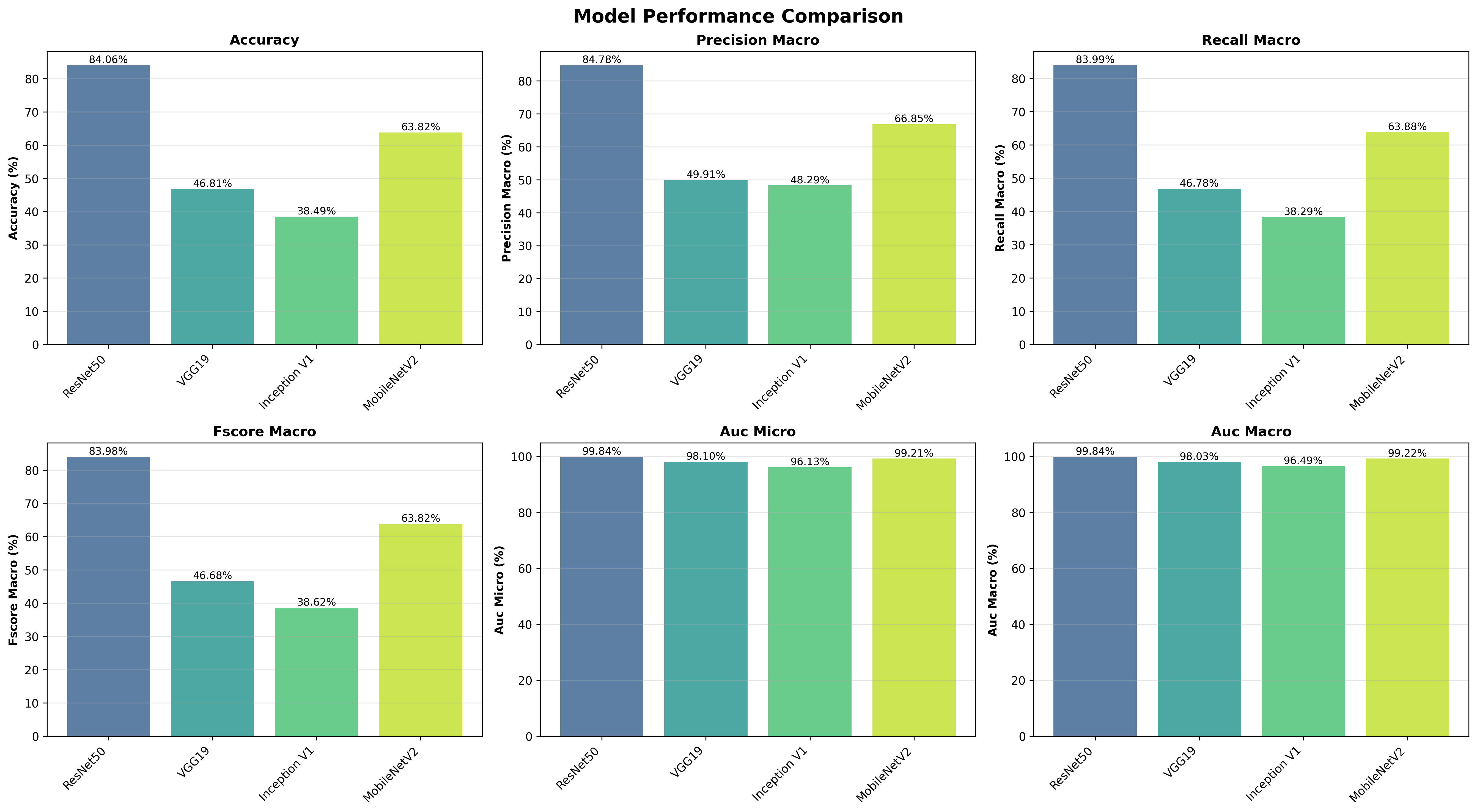

The models were evaluated across three complexity levels (10, 20, and 196 classes). The ResNet-50 model, leveraging deep residual learning and ImageNet pre-trained weights, achieved the highest performance on the full dataset.

| Model | Accuracy | F1-Score (Macro) | AUC (Macro) |
|---|---|---|---|
| ResNet-50 | 84.06% | 83.98% | 99.84% |
| MobileNetV2 | 63.82% | 63.82% | 99.22% |
| VGG-19 (Scratch) | 46.81% | 46.68% | 98.03% |
| Inception V1 | 38.49% | 38.62% | 96.49% |
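For readers unfamiliar with macro averaging: the macro F1-score is the unweighted mean of the per-class F1 values, so each class counts equally regardless of how many test images it has. The following is a minimal pure-Python sketch of that definition. It is not the repository's evaluation code, which presumably relies on `scikit-learn`, and the function names are illustrative.

```python
from collections import Counter


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 over all labels seen in either list."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # predicted class gains a false positive
            fn[t] += 1  # true class gains a false negative
    scores = []
    for c in sorted(set(y_true) | set(y_pred)):
        denom = 2 * tp[c] + fp[c] + fn[c]
        # F1 = 2TP / (2TP + FP + FN); defined as 0 when the class never occurs.
        scores.append(2 * tp[c] / denom if denom else 0.0)
    return sum(scores) / len(scores)


if __name__ == "__main__":
    y_true = [0, 0, 1, 1, 2, 2]
    y_pred = [0, 0, 1, 2, 2, 2]
    print(f"accuracy={accuracy(y_true, y_pred):.4f}, macro F1={macro_f1(y_true, y_pred):.4f}")
```

With classes of similar size, accuracy and macro F1 tend to stay close to each other, as they do in the table above.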

The superior performance of ResNet-50 is attributed to its ability to learn highly discriminative features for fine-grained classification; it also scaled well and remained stable as the number of classes increased.
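The 10-class and 20-class trials require restricting the dataset to a subset of labels. The README does not say how those subsets were chosen, so the sketch below simply assumes the first `k` class ids are kept and remapped to `0..k-1`. `select_class_subset` is a hypothetical helper, not a function from this repository.

```python
def select_class_subset(samples, num_classes):
    """Keep samples from the first `num_classes` label ids and remap them to 0..k-1.

    `samples` is a list of (item, label) pairs. Which classes the project
    actually kept is not documented here; "first k ids" is an assumption.
    """
    kept_ids = sorted({label for _, label in samples})[:num_classes]
    remap = {old: new for new, old in enumerate(kept_ids)}
    return [(item, remap[label]) for item, label in samples if label in remap]


if __name__ == "__main__":
    data = [("a.jpg", 5), ("b.jpg", 2), ("c.jpg", 9), ("d.jpg", 2)]
    print(select_class_subset(data, 2))
```

Remapping the labels to a contiguous range matters because a classifier head with `k` outputs expects targets in `0..k-1`.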

The trained model weights and checkpoints for all four architectures (ResNet-50, VGG-19, Inception V1, and MobileNetV2) are too large to host directly on GitHub.

They have been securely uploaded and are available for download via the following Google Drive link:

Download All Model Checkpoints

Note: These weights are necessary to run the final evaluation scripts (Evaluation_XXX_classes.py) and reproduce the results presented in the documentation.

The project is organized into modular directories based on the classification complexity, ensuring clear separation of code and results.

    /Car_Type_Classification_Description/
    ├── 196_classes/
    │   ├── Data Preprocessing_196_classes.py    # Data loading, augmentation, and splitting
    │   ├── VGG-19_196_classes.ipynb             # VGG-19 (from scratch) training notebook
    │   ├── ResNet_196_classes.ipynb             # ResNet-50 (transfer learning) training notebook
    │   ├── InceptionV1_196_classes.ipynb        # Inception V1 (transfer learning) training notebook
    │   ├── MobileNetV2_196_classes.ipynb        # MobileNetV2 (transfer learning) training notebook
    │   ├── Evaluation_196_classes.py            # Script to generate all metrics and visualizations
    │   └── Evaluation Results_196_classes/      # All output metrics, confusion matrices, and plots
    ├── 20_classes/                              # Code and results for the 20-class subset
    ├── 10_classes/                              # Code and results for the 10-class subset
    ├── Car_Classification_Documentation.pdf     # The comprehensive academic report (Final Deliverable)
    ├── Project Requirements/                    # Original project requirement documents
    ├── requirements.txt                         # Project dependencies for environment setup
    └── README.md                                # This file

- Python 3.8+
- PyTorch & Torchvision
- Hugging Face `datasets` library
- Standard scientific computing libraries (`pandas`, `numpy`, `matplotlib`, `scikit-learn`)

- Clone the repository:

      git clone https://github.com/MalakAlaa2004/Car_Type_Classification_Description.git
      cd Car_Type_Classification_Description

- Install dependencies:

      pip install -r requirements.txt

The project uses Jupyter Notebooks for training and a dedicated script for final evaluation.

- Training: Open and execute the desired training notebook (e.g., `196_classes/ResNet_196_classes.ipynb`) to train the model and save the weights.
- Evaluation: Run the evaluation script to generate all final metrics and visualizations:

      python 196_classes/Evaluation_196_classes.py

  All generated results are saved in the respective `Evaluation Results_XXX_classes` directory.
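The `XXX` placeholder in the script and directory names stands for the class count. As a small convenience, the sketch below builds the expected paths for a given class count. The 196-class names come from the project tree; the 10-class and 20-class names are assumed to follow the same pattern, and `evaluation_paths` is an illustrative helper rather than part of the repository.

```python
from pathlib import Path

SUPPORTED_CLASS_COUNTS = (10, 20, 196)


def evaluation_paths(num_classes, repo_root="."):
    """Return (script_path, results_dir) for one classification setting.

    Assumes every setting follows the naming pattern of the 196-class directory.
    """
    if num_classes not in SUPPORTED_CLASS_COUNTS:
        raise ValueError(f"expected one of {SUPPORTED_CLASS_COUNTS}, got {num_classes}")
    subdir = Path(repo_root) / f"{num_classes}_classes"
    script = subdir / f"Evaluation_{num_classes}_classes.py"
    results = subdir / f"Evaluation Results_{num_classes}_classes"
    return script, results


if __name__ == "__main__":
    script, results = evaluation_paths(196)
    print(f"run: python {script.as_posix()}")
    print(f"results in: {results.as_posix()}")
```

Because the results directory name contains a space, quote the path when passing it to shell commands.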

For a detailed breakdown of the methodology, architectural explanations (with diagrams and citations), in-depth comparative analysis, and discussion of the 10-class and 20-class trials, please refer to the final academic report, `Car_Classification_Documentation.pdf`, in the repository root.