Suspending a VM before snapshot deletion (see PR #3193) #3194

… storage (see PR #3193)

Two questions.

Hello and thank you for your attention. Here are the answers.

The VM gets resumed right after the snapshot deletion procedure:

The VM is paused while the snapshot is being created, but then it resumes its work. The snapshot is copied to the secondary storage, some time passes, and then the snapshot is deleted from the image file without suspending the VM, which could be quite a risky maneuver.

@Melnik13 thanks for your reply.

Hello, I've seen it happen about a dozen times, really. When it happened to VMs that are constantly monitored by Nagios (there were at least 2 occurrences), I saw that these VMs were working fine during the "backing up" period (while their snapshots were being copied to the secondary storage), but they failed right after their snapshots had been deleted from their volumes' images on primary storage. Sometimes it's possible to repair these images with …

We have also had corrupted VMs. We are on 4.9.3 with CentOS/KVM. I can't say which part of the snapshot process is killing our VMs, but it has been horrible to deal with. We are using NFS.

@Melnik13 good, thanks for your reply.

@Melnik13 By the way, could you please add another commit to suspend the VM when deleting a VM snapshot?

@Melnik13 I wonder whether there are any steps to reproduce this corruption. Could a debugger and step-by-step execution come in handy here?

Hello, has there been any more progress on this?

@borisstoyanov Push high I/O to the VM's volume and to the storage overall, and try playing with volume snapshots. After taking 10-20+ of them, adding new ones and removing old ones (e.g. a scheduled scenario), stop the VM: there is a chance it will not boot because of a corrupted qcow2 image.
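The rotation described above (take a new volume snapshot, then delete the oldest once a retention limit is exceeded) can be sketched as a loop. This is a minimal Python sketch, not part of CloudStack: `take_snapshot` and `delete_snapshot` are hypothetical callables standing in for whatever API calls a test harness would make while the guest is under heavy disk I/O, and `keep` is an assumed retention count.

```python
from collections import deque
from typing import Callable, Deque, List


def rolling_snapshot_cycle(
    take_snapshot: Callable[[int], str],
    delete_snapshot: Callable[[str], None],
    rounds: int,
    keep: int,
) -> List[str]:
    """Hypothetical reproduction loop: each round takes one new snapshot,
    then deletes the oldest ones until at most `keep` remain, mimicking a
    scheduled snapshot policy with a retention limit."""
    live: Deque[str] = deque()
    for i in range(rounds):
        live.append(take_snapshot(i))
        # Deleting old snapshots from a running guest is the step
        # suspected of corrupting the qcow2 image.
        while len(live) > keep:
            delete_snapshot(live.popleft())
    return list(live)
```

With `rounds` somewhere around 10-20 or more, the VM would then be stopped and started again to see whether it still boots.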

@Melnik13 any updates on this PR?

Dear colleagues, sorry for the pause! On the 29th of April, I'm going to install the patched version in one of my production environments to make sure that my patch can't harm anything. I tested it in the lab, but I'd also like to check it in a busier environment.

Hello, how's it going so far? Any more corruptions?

@Melnik13 any updates?

@blueorangutan package

@rhtyd a Jenkins job has been kicked to build packages. I'll keep you posted as I make progress.

Packaging result: ✔ centos6 ✔ centos7 ✔ debian. JID-2810

Dear colleagues, I'm really sorry for such a long pause. There are certain circumstances that you would consider a "good excuse", so I hope you won't blame me too much for my silence. Thanks!

@blueorangutan test centos7 kvm-centos6

Trillian test result (tid-3610)

@Melnik13 @rhtyd Here is a link to an article advising against using qemu-img to take snapshots of running VMs (lots of other posts/pages agree): https://www.cyberciti.biz/faq/how-to-create-create-snapshot-in-linux-kvm-vmdomain/ Should we be suspending the VM before taking the snapshot? Is suspending enough to prevent corruption, or is it just "safer"? We are very gun-shy about snapshots on KVM after experiencing corruption on several VMs... Thanks for any input!

You're absolutely right, but ACS doesn't use qemu-img to create a snapshot of a running instance. It runs qemu-img only if the instance is stopped; for a running instance it calls libvirt to perform the job (and libvirt always suspends the VM). The problem occurs when ACS deletes the snapshot from the running instance's volume. During the deletion, libvirt doesn't suspend the instance (well, the instance is frozen somehow, but …). I don't think it's a bug in ACS; it seems to be a bug in libvirt or QEMU/KVM (by the way, Proxmox users are facing these issues too). Still, our workaround (manually suspending the instance before the snapshot deletion) could help us outflank the problem. Thanks.
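The workaround boils down to an ordering rule: a stopped instance can have its snapshot removed directly, while a running instance is suspended first and resumed afterwards, even if the deletion fails. Below is a minimal Python sketch of that rule. It is not the actual (Java) CloudStack patch: `Guest` is a hypothetical handle exposing `is_running()`, `suspend()` and `resume()` (roughly what libvirt's `virDomainSuspend`/`virDomainResume` provide), and `delete_fn` stands in for the real deletion routine.

```python
from typing import Callable, Protocol


class Guest(Protocol):
    """Hypothetical handle on a VM; not a real libvirt or CloudStack type."""

    def is_running(self) -> bool: ...
    def suspend(self) -> None: ...
    def resume(self) -> None: ...


def delete_snapshot_safely(
    guest: Guest, delete_fn: Callable[[str], None], snapshot: str
) -> None:
    """Remove `snapshot` from the guest's volume without letting the guest
    write to the image while the deletion is in progress."""
    if not guest.is_running():
        # Stopped instance: nothing is writing to the image, delete directly.
        delete_fn(snapshot)
        return
    guest.suspend()
    try:
        delete_fn(snapshot)
    finally:
        # Resume even if the deletion raised, so the VM is not left paused.
        guest.resume()
```

The `try`/`finally` is the important design choice here: without it, a failed deletion would leave the instance paused indefinitely.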

@blueorangutan test

@rhtyd a Trillian-Jenkins test job (centos7 mgmt + kvm-centos7) has been kicked to run smoke tests

Code LGTM, tested OK.

Trillian test result (tid-3618)

@Melnik13 we can merge this as soon as @ustcweizhou's review comment is addressed. Thanks.

Thank you for your comment. Where would it be better to add this information? I'd also like to mention that nothing will change from the user's point of view, because the instance is actually frozen while the snapshot is being deleted. We suspect that the instance isn't being "properly" suspended (the instance doesn't react to anything, but …

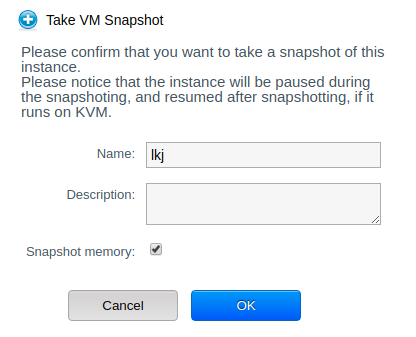

@Melnik13 Perhaps you can send a PR to cloudstack-documentation (https://github.com/apache/cloudstack-documentation). Also, in the CloudStack UI, the create/delete VM snapshot form/dialog could say that the operation will pause the guest VM.

@Melnik13 We already have a message in ui/l10n/ that warns users "Please notice that the instance will be paused during the snapshoting, and resumed after snapshotting, if it runs on KVM." when they create a VM snapshot.

anuragaw left a comment

LGTM based on code and discussions here.

@rhtyd good

…he#3194) To make sure that a qcow2 image won't be corrupted by the snapshot deletion procedure, which is performed after the snapshot has been copied to a secondary store, I'd propose putting the VM into a suspended state. Additional reference: https://bugzilla.redhat.com/show_bug.cgi?id=920020#c5 Fixes apache#3193 (cherry picked from commit c94ee14)

…n, th… (#4032) These changes are related to PR #3194, but also suspend/resume the VM when taking a VM snapshot and when deleting a VM snapshot, since those perform the same operations via libvirt. Also, there was an issue with the UI/localization changes in the prior PR: that PR altered the volume snapshot behavior but changed the VM snapshot wording. Both have been corrected in this PR. This is being issued in response to the work happening in PR #4029.
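Since #4032 applies the same suspend/resume treatment to VM snapshot creation and deletion, a reusable wrapper avoids repeating the pattern around each libvirt operation. A hedged Python sketch using a context manager: `guest_suspended` is a hypothetical helper, `guest` is assumed to expose `is_running()`, `suspend()` and `resume()`, and nothing here is taken from the CloudStack code base.

```python
from contextlib import contextmanager
from typing import Iterator


@contextmanager
def guest_suspended(guest) -> Iterator[None]:
    """Hypothetical helper: keep a running guest paused for the duration of
    the `with` block and resume it afterwards, even if the block raises.
    A guest that was not running is left untouched."""
    was_running = guest.is_running()
    if was_running:
        guest.suspend()
    try:
        yield
    finally:
        if was_running:
            guest.resume()


# Hypothetical usage; create_vm_snapshot / delete_vm_snapshot are placeholders:
#     with guest_suspended(vm):
#         create_vm_snapshot(vm, "before-upgrade")
#     with guest_suspended(vm):
#         delete_vm_snapshot(vm, "before-upgrade")
```

Recording whether the guest was running before pausing it means a stopped guest is never suspended or resumed by the wrapper.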

Description

To make sure that a qcow2 image won't be corrupted by the snapshot deletion procedure, which is performed after the snapshot has been copied to a secondary store, I'd propose putting the VM into the `suspended` state.

Fixes: #3193

Types of changes

How Has This Been Tested?

I tried it in my environment: the VM was suspended, the snapshot was removed, and then the VM was resumed.