This project is being developed by a design team in the DAIR division of QMIND (DAIR @ QMIND).

We aim to develop a TensorFlow model that predicts the 3D shape and pose of two hands under heavy hand-to-hand and hand-to-object contact, using only a monocular RGB input. This project is still under development. For anything regarding this project, see the project roadmap.

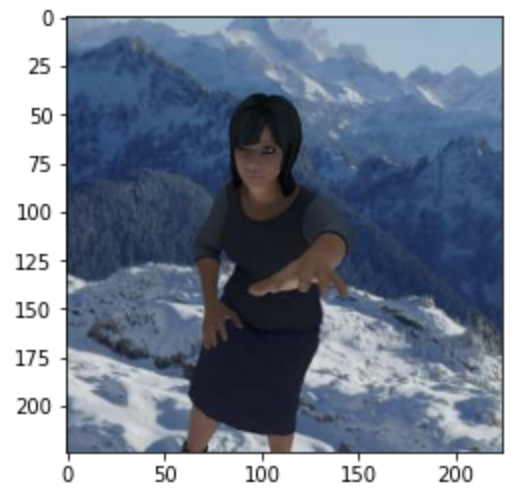

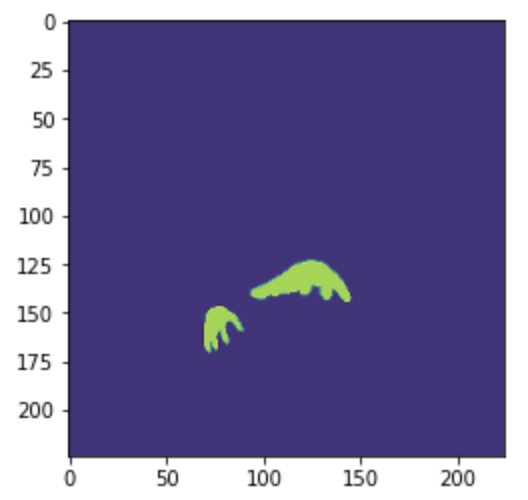

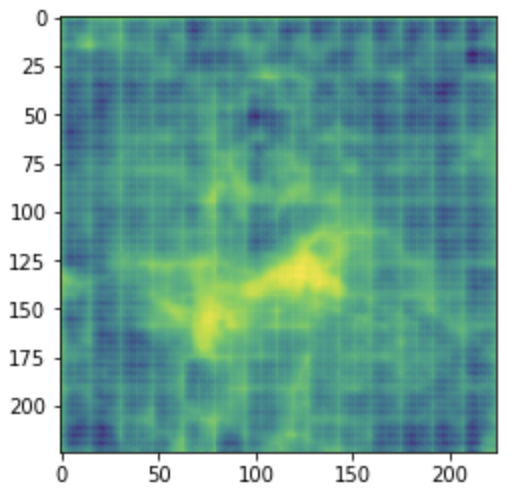

Our preliminary results are an implementation of U-Net that predicts segmentation masks for images from the RHD (Rendered Handpose Dataset) dataset.

Pictured from left to right: input image, ground-truth segmentation mask, model prediction.
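For readers unfamiliar with U-Net, the following is a minimal sketch of the general encoder-decoder-with-skip-connections idea in TensorFlow/Keras. It is not the architecture used in this repository: the names `conv_block` and `build_unet`, the 256x256 input size, the filter counts, the depth, and the single-channel (hand vs. background) sigmoid output are all assumptions made for illustration.

```python
import tensorflow as tf
from tensorflow.keras import layers


def conv_block(x, filters):
    """Two 3x3 same-padded convolutions with ReLU (hypothetical helper)."""
    x = layers.Conv2D(filters, 3, padding="same", activation="relu")(x)
    x = layers.Conv2D(filters, 3, padding="same", activation="relu")(x)
    return x


def build_unet(input_shape=(256, 256, 3), base_filters=16, depth=3):
    """Build a small U-Net-style model (illustrative, not the project's model).

    The input height and width must be divisible by 2**depth so that the
    upsampled feature maps line up with the skip connections.
    """
    inputs = tf.keras.Input(shape=input_shape)
    x = inputs
    skips = []

    # Encoder: convolve, remember the feature map, then halve the resolution.
    for i in range(depth):
        x = conv_block(x, base_filters * 2**i)
        skips.append(x)
        x = layers.MaxPooling2D(2)(x)

    # Bottleneck at the lowest resolution.
    x = conv_block(x, base_filters * 2**depth)

    # Decoder: double the resolution, concatenate the matching skip, convolve.
    for i in reversed(range(depth)):
        x = layers.Conv2DTranspose(
            base_filters * 2**i, 2, strides=2, padding="same"
        )(x)
        x = layers.Concatenate()([x, skips[i]])
        x = conv_block(x, base_filters * 2**i)

    # Assumption: one output channel giving a per-pixel hand probability.
    outputs = layers.Conv2D(1, 1, activation="sigmoid")(x)
    return tf.keras.Model(inputs, outputs, name="unet_sketch")


model = build_unet()
model.compile(optimizer="adam", loss="binary_crossentropy")
```

The output has the same height and width as the input, so each pixel of the prediction can be compared directly with the ground-truth mask. A multi-class mask would instead need one output channel per class with a softmax activation and a categorical loss.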

Simply clone the repo and run all code blocks in `src/HandTracking.ipynb`. Read the comments at the top of each code block carefully, as some blocks are meant to run only when using the project from within Google Colab.
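If it is unclear whether a Colab-only block applies to your environment, a check like the one below can help. It is a sketch based on a common heuristic, not code taken from the notebook: the function name `running_in_colab` is hypothetical, and mounting Google Drive is only an assumed example of Colab-specific setup.

```python
import sys


def running_in_colab() -> bool:
    """Return True when this code appears to run inside Google Colab.

    Heuristic: the Colab kernel loads the google.colab package at startup,
    so the module name shows up in sys.modules.
    """
    return "google.colab" in sys.modules


if running_in_colab():
    # Assumption: an example of Colab-only setup, here mounting Google Drive.
    from google.colab import drive

    drive.mount("/content/drive")
else:
    print("Not running in Colab: skip the Colab-only code blocks.")
```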